Fragmentation in AI Governance Is the New Normal. Time to Embrace It

Global AI governance is no longer the goal, and 2025 made that clear. With the EU, China, U.S. states, and dozens of nations building incompatible AI rules, fragmentation is not a failure. It’s the new reality, and the companies that learn to navigate it will shape AI’s future.

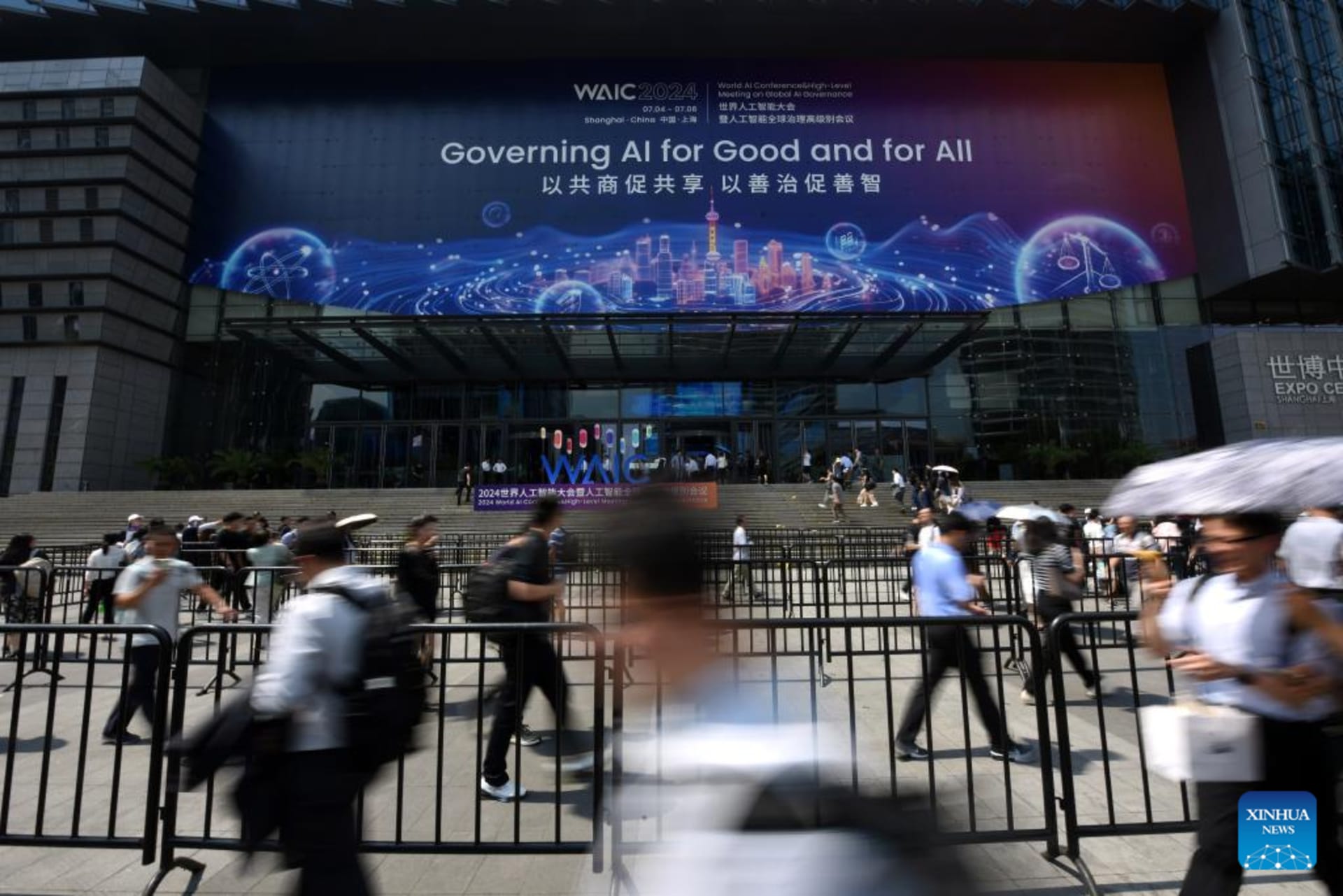

When it comes to governing AI’s adoption, as opposed to infrastructure like chips or data centers, the action is quietly shifting from aspirational endeavors to pragmatic realities. If you’re still waiting for “global AI governance” to arrive from high-level summits or broad multilateral frameworks, I’m afraid you may be waiting for a long time. The year 2025 will be remembered as a pivotal one, in which the AI debate shifted away from the ideal of transnational coherence and toward the reality of regulatory fragmentation.

For years, the AI governance discourse has been defined by abstract policy questions: How do we prevent algorithmic bias? How do we ensure transparency? How do we manage concentrations of power? How do we maximize beneficial impacts? These are important questions, and they’re being asked, often thoughtfully, in government agencies, think tanks, universities, and international forums.

But as generative AI evolves from the theoretical capabilities identified in models to the harsh realities of implementation within markets, AI governance will increasingly emerge where the technology actually gets deployed: in the financial systems managing credit decisions, the pharmaceutical labs developing diagnostics, the city governments determining investment priorities, and the medical providers reimagining patient care. This is not where most discussions about AI governance currently focus.

As with all innovation, AI’s transformational potential will only be unlocked through adoption at scale. Such adoption will reflect specific use cases, not a one-size-fits-all “AI world.” Abstract policy debates will and should continue, but at the same time, AI’s impact on people’s lives will emerge in the concrete, unglamorous work of deploying AI within centralized systems that serve large societal needs. Such enterprise-level adoptions may represent the best opportunity to determine whether AI will scale beneficial innovations or widespread discrimination, democratic principles or authoritarian controls. At the very least, they merit close attention.

This article appears in part. To read the full piece, visit World Politics Review.