Automation, Productivity, and Growth

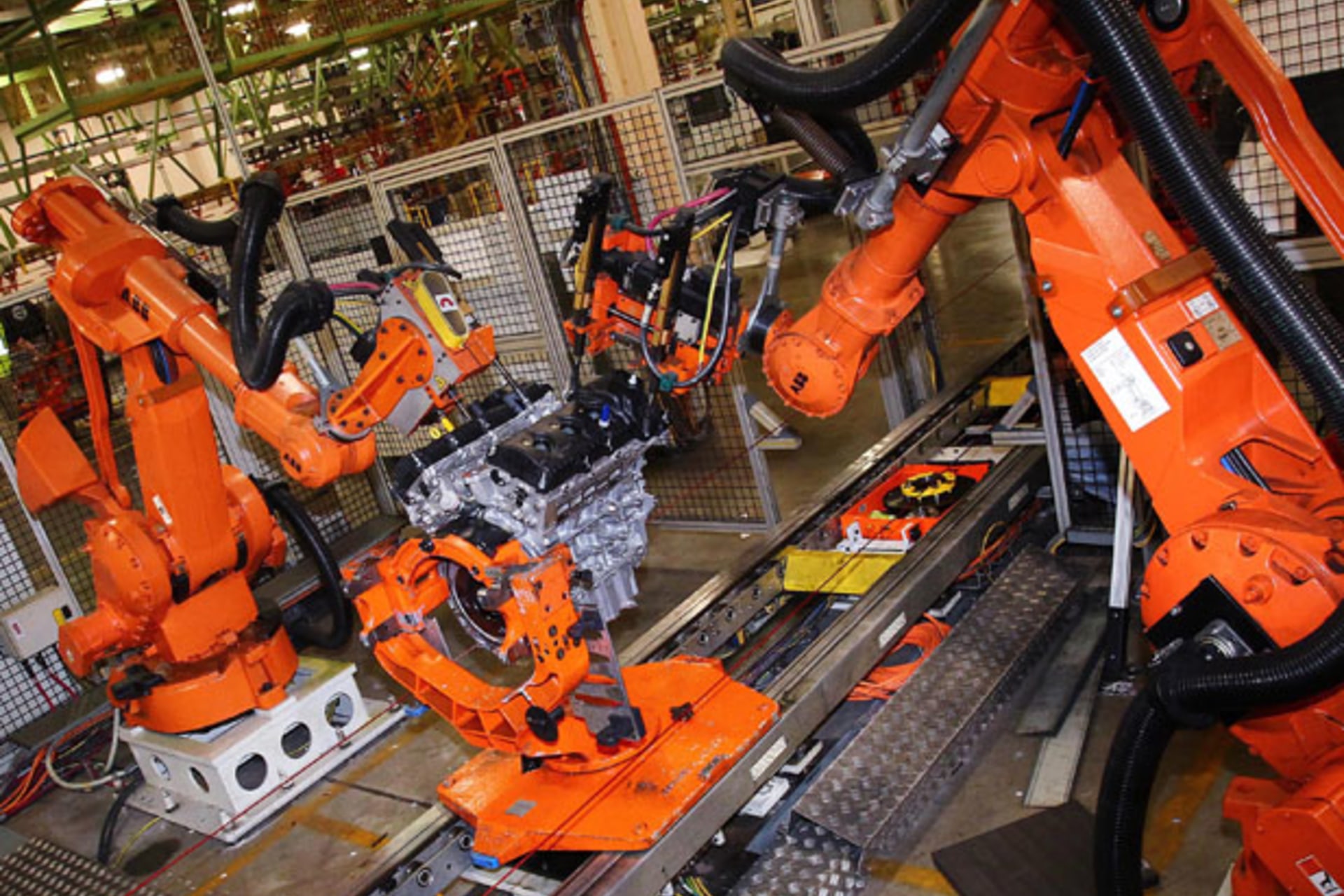

It seems obvious that if a business invests in automation, its workforce – though possibly reduced – will be more productive. So why do the statistics tell a different story?

In advanced economies, where plenty of sectors have both the money and the will to invest in automation, growth in productivity (measured by value added per employee or per hour worked) has been low for at least 15 years. And in the years since the 2008 global financial crisis, these countries’ overall economic growth has been meager, too, averaging 2% a year or less.

One explanation is that the advanced economies had taken on too much debt and needed to deleverage, which contributed to a pattern of public-sector underinvestment and depressed consumption and private investment as well. But deleveraging is a temporary process, not one that limits growth indefinitely. In the long term, overall economic growth depends on growth in the labor force and its productivity.

Hence the question on the minds of politicians and economists alike: Is the productivity slowdown a permanent condition and constraint on growth, or is it a transitional phenomenon?

There is no easy answer – not least because of the wide range of factors contributing to the trend. Beyond public-sector underinvestment, there is monetary policy, which, whatever its benefits and costs, has shifted corporate use of cash toward stock buy-backs, while real investment has remained subdued.

Meanwhile, information technology and digital networks have automated a range of white- and blue-collar jobs. One might have expected this transition, which reached a tipping point in the United States around 2000, to cause unemployment (at least until the economy adjusted), accompanied by a rise in productivity. But US data show that productivity trended downward in the years leading up to the 2008 crisis, and unemployment did not rise significantly until the crisis hit.

One explanation is that employment in the years before the crisis was being propped up by credit-fueled demand. Only when the credit bubble burst – triggering an abrupt adjustment, rather than the gradual adaptation of skills and human capital that would have occurred in more normal times – did millions of workers suddenly find themselves unemployed. The implication is that the economic logic equating automation with increased productivity has not been invalidated; its proof has merely been delayed.

But there is more to the productivity conundrum than the 2008 crisis. In the two decades that preceded the crisis, the sector of the US economy that produces internationally tradable goods and services – one-third of overall output – failed to generate any increase in jobs, even though it was growing faster than the non-tradable sector in terms of value added.

Most of the job losses in the tradable sector were in manufacturing industries, especially after the year 2000. Although some of the losses may have resulted from productivity gains from information technology and digitization, many occurred when companies shifted segments of their supply chains to other parts of the global economy, particularly China.

By contrast, the US non-tradable sector – two-thirds of the economy – recorded large increases in employment in the years before 2008. However, these jobs, often in domestic services, usually generated less value added per worker than the manufacturing jobs that had disappeared. Meanwhile, value added per employee in the tradable sector was rising, partly because that sector was shifting toward employees with high levels of skill and education. In that sense, productivity rose in the tradable sector, although structural shifts in the global economy were surely as important as employees becoming more efficient at doing the same things.

Unfortunately for advanced economies, the gains in per capita value added in the tradable sector were not large enough to overcome the effect of moving labor from manufacturing jobs to non-tradable service jobs (many of which existed only because of credit-fueled domestic demand in the halcyon days before 2008). Hence the muted overall productivity gains.
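The arithmetic behind this composition effect can be made explicit with a standard shift-share identity. This is an illustrative sketch, not the author's own calculation. Let $s_T$ and $s_N$ be the employment shares of the tradable and non-tradable sectors ($s_T + s_N = 1$), and let $p_T$ and $p_N$ be value added per worker in each. Aggregate productivity is the employment-weighted average, and its change between two periods splits into within-sector gains, a reallocation term, and an interaction term:

$$
\begin{aligned}
P &= s_T\,p_T + s_N\,p_N,\\
\Delta P &= \underbrace{\sum_{i\in\{T,N\}} s_i^{0}\,\Delta p_i}_{\text{within-sector gains}}
 + \underbrace{\sum_{i\in\{T,N\}} p_i^{0}\,\Delta s_i}_{\text{reallocation}}
 + \underbrace{\sum_{i\in\{T,N\}} \Delta s_i\,\Delta p_i}_{\text{interaction}}.
\end{aligned}
$$

Because $\Delta s_N = -\Delta s_T$, the reallocation term equals $\Delta s_T\,(p_T^{0} - p_N^{0})$, which is negative whenever labor leaves the higher-productivity tradable sector. With purely hypothetical numbers, suppose $s_T$ falls from 0.4 to 0.3 while $p_T$ rises from 100 to 110 and $p_N$ stays at 60. The within-sector gain is $0.4 \times 10 = 4$, reallocation contributes $(-0.1)(100 - 60) = -4$, and the interaction term is $(-0.1)(10) = -1$. Aggregate productivity therefore slips from 76 to 75, even though productivity in the tradable sector rose by 10%.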

Meanwhile, as developing economies become richer, they, too, will invest in technology in order to cope with rising labor costs (a trend already evident in China). As a result, the high-water mark for relocating production to low-wage economies may have been reached.

The organizing principle of global supply chains for most of the post-war period has been to move production toward low-cost pools of labor, because labor was and is the least mobile of the economic factors (labor, capital, and knowledge). That will remain true for labor-intensive services that defy automation. But for capital-intensive digital technologies, the organizing principle will change: production will move toward final markets, which will increasingly be found not just in advanced countries, but also in emerging economies as their middle classes expand.

Martin Baily and James Manyika recently pointed out that we have seen this movie before. In the 1980s, Robert Solow and Stephen Roach separately argued that IT investment was showing no impact on productivity. Then the Internet became widely available, businesses reorganized themselves and their global supply chains, and productivity accelerated.

The dot-com bubble of the late 1990s was a misestimate of the timing, not the magnitude, of the digital revolution. Likewise, Manyika and Baily argue that the much-discussed “Internet of Things” is probably some years away from showing up in aggregate productivity data.

Organizations, businesses, and people all have to adapt to the technologically driven shifts in our economies’ structure. These transitions will be lengthy, rewarding some and forcing difficult adjustments on others, and their productivity effects will not appear in aggregate data for some time. But those who move first are likely to benefit the most.

This article originally appeared on project-syndicate.org.