Artificial Intelligence (AI)

Archive

217 results

![A photo illustration of the Anthropic logo.]() By Michael Froman

![A large colorful sculpture of the Google logo on display at the AI Impact Summit, in New Delhi, India.]() By Kat Duffy

![Two soldiers hold controllers and wear tech head pieces as they operate a first-person-view drone during a live-fire exercise.]() By Michael C. Horowitz and Lauren Kahn

![Conduits for fiber to connect superclusters of data centers are under construction during a tour of the OpenAI data center in Abilene, Texas, U.S., September 23, 2025.]() By Shannon K. O'Neil

![People use augmented reality headsets during the World Artificial Intelligence Conference (WAIC) in Shanghai on July 28, 2025.]() By Chris McGuire, Kat Duffy, Vinh Nguyen, Michael C. Horowitz, Adam Segal and Jessica Brandt

![Displaced Sudanese flee Zamzam displacement camp in North Darfur, Sudan, after attacks by the Rapid Support Forces, April 15, 2025.]() By James M. Lindsay

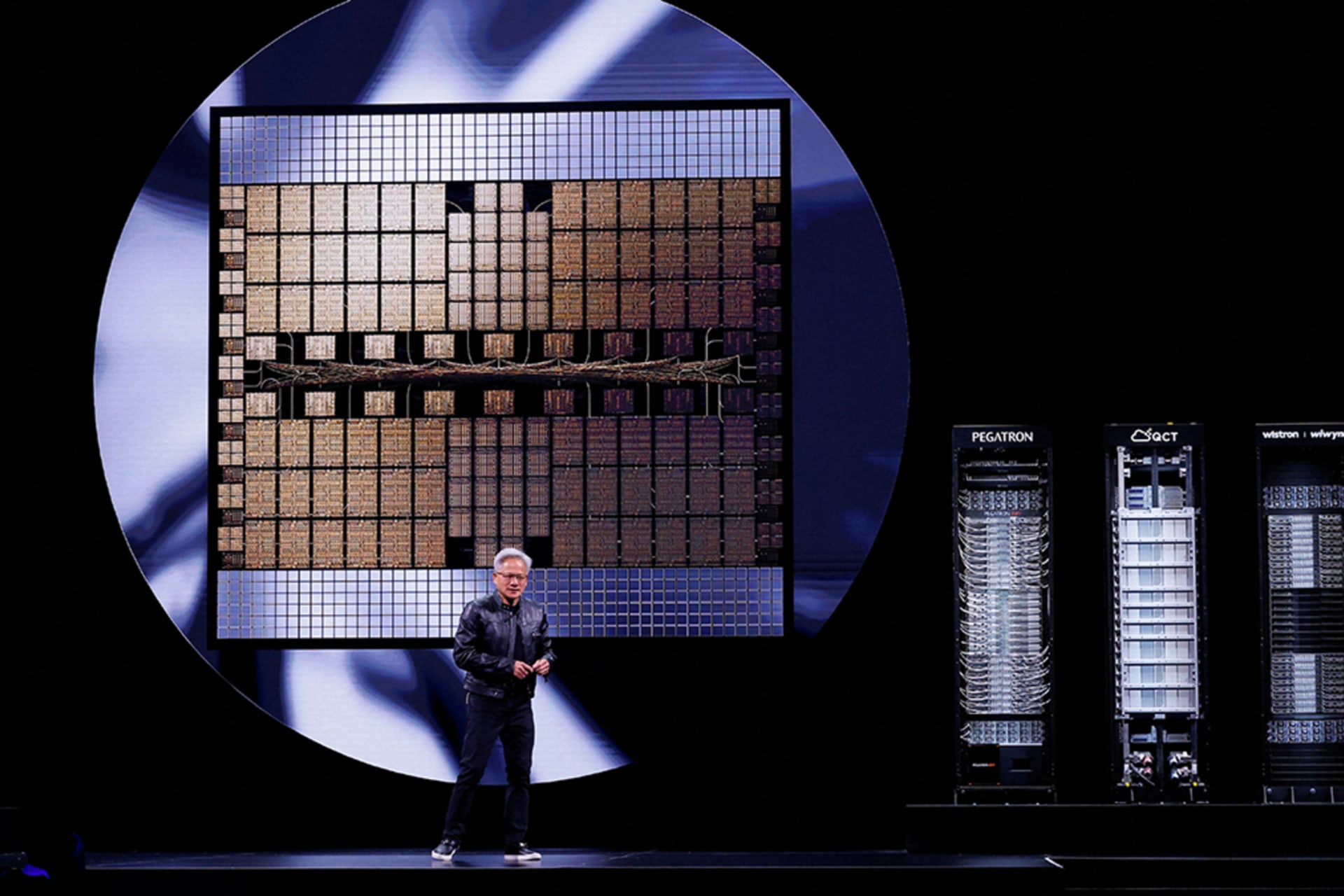

![Nvidia CEO Jensen Huang makes a keynote speech at Computex in Taipei, Taiwan, May 19, 2025.]() By Michael C. Horowitz, Chris McGuire and Zongyuan Zoe Liu

![Gemini image]() By Sebastian Elbaum and Jonathan Panter

![A striking port worker stands at the entrance to the Port of New Orleans, Louisiana, on October 2, 2024. About 45,000 dock workers walked out at 36 U.S. ports amid fears of job loss in an AI-driven future.]() By Rebecca Patterson and Ishaan Thakker

![First Lt. Maurielle Pankau, a 183rd Air Component Operations Squadron intelligence analyst planner, participates in the Shadow Operations Center-Nellis Experiment 3 at Nellis Air Force Base, Nevada on June 13.]() By Michael C. Horowitz and Radha Iyengar Plumb

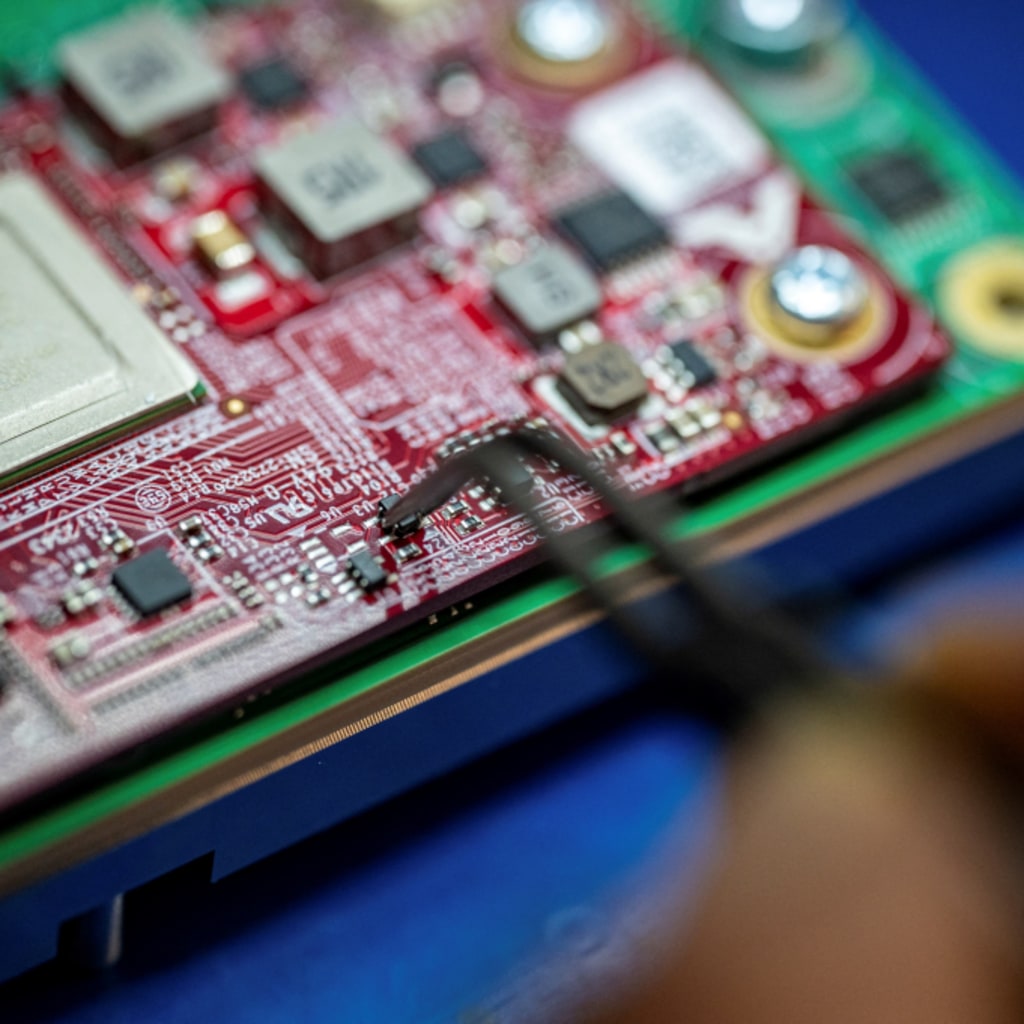

![--]() By Sebastian Elbaum and Maximilian Hippold

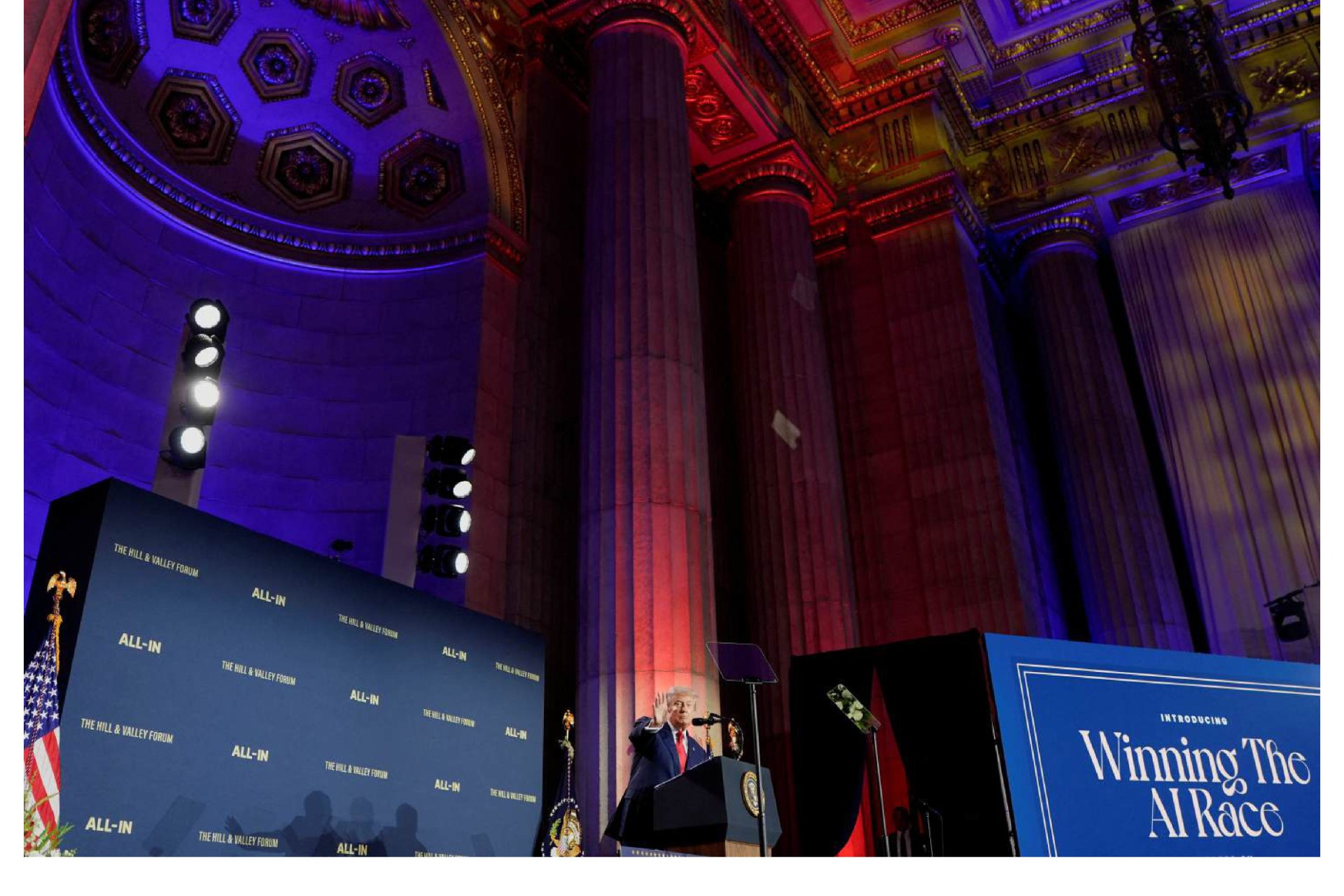

![President Donald Trump delivers remarks on artificial intelligence at the “Winning the AI Race” Summit in Washington D.C., on July 23.]() By Sebastian Mallaby, Jessica Brandt, Michael C. Horowitz, Kat Duffy, Erin D. Dumbacher, Rush Doshi and Jonathan E. Hillman

![U.S. President Donald Trump and Crown Prince of Abu Dhabi Sheikh Khaled bin Mohamed bin Zayed Al Nahyan attend a business forum at Qasr Al Watan during the final stop of his Gulf visit, in Abu Dhabi, United Arab Emirates, May 16, 2025.]() By Michael Froman
