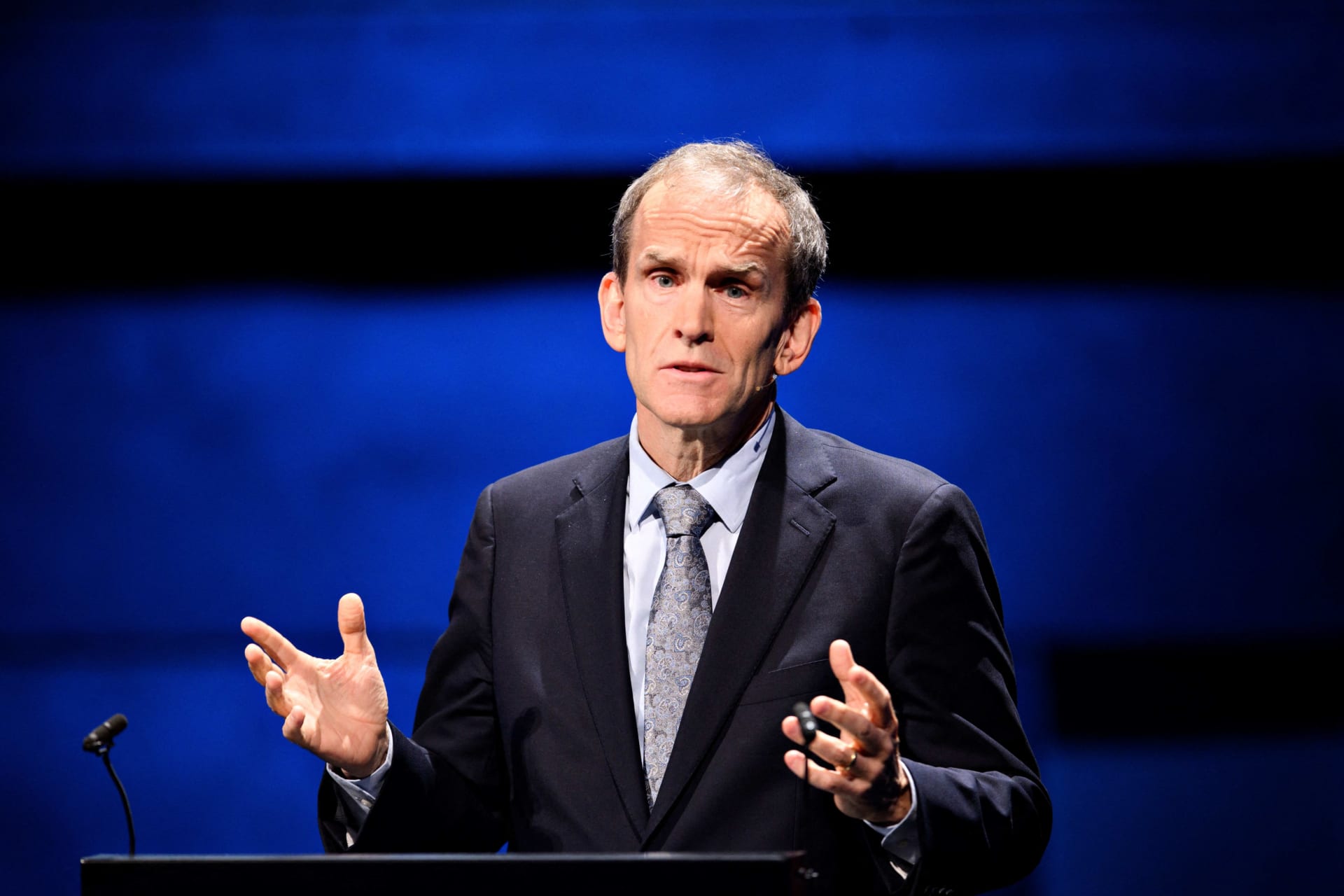

Governing Artificial Intelligence: A Conversation with Kent Walker

Kat Duffy moderated a private dialogue on AI and human rights with Google’s Kent Walker and heads of human rights organizations on the sidelines of UNGA. This follow-up conversation reflects some themes raised in that session.

Kent Walker is the President of Global Affairs at Google and Alphabet and a CFR Life Member. Mr. Walker oversees Google’s work on public policy, legal, regulatory, and compliance issues; content trust and safety; philanthropy; and responsible innovation.

Artificial intelligence’s transformational possibility is currently the focus of conversations at everything from kitchen tables to UN summits. What can be built today with AI to solve one of society’s big challenges, and how can we drive attention and investment towards it?

AI’s much more than a chatbot. It’s a once-in-a-generation technology shift, one that may be on par with electricity as a general-purpose tool that will improve lives across the globe. It has the potential to change the way we do science, and may unlock opportunities across multiple disciplines.

We’re already seeing results: Google’s AI for Social Good project has worked with partners to use AI to help detect diabetes earlier and more affordably, and we’ve enabled earlier and more available flood forecasting. Our Thousand Language Initiative helps preserve languages that might otherwise be in danger of dying out. We’ve integrated AI into our Data Commons initiative to make public data more available and easier to use for civil society groups, and we’ve worked with the UN Statistics Division to build the UN Data Commons for the Sustainable Development Goals (SDGs), tracking metrics across the seventeen SDGs.

The data underlying large language models (LLMs) raises fundamental questions about accuracy and bias, and whether these models should be accessible, auditable, or transparent. Is it possible to establish meaningful accountability or transparency for LLMs, and if so, what are effective means of achieving that?

Transparency is an important and challenging topic. We’re working to develop better methods of understanding how models operate. As leading models are trained on trillions of data points, reflect complex and evolving math formulas, and often go through adversarial training, it can be hard to attribute a specific output to a particular set of inputs, but it’s an ongoing area of research. When it comes to accountability for AI deployment, we’re navigating evolving questions about intellectual property, attribution, provenance, data quality, and open access versus closed models. We’re also expanding access to our internal risk management and auditing practices through biannual reports under Europe’s Digital Services Act, and making more data available to researchers so that they can evaluate our services.

The market for powerful AI tools is growing exponentially, as is easy, public access to those tools. Although calls for AI governance are increasing, governance will struggle to keep pace with AI’s market and technological evolution. Which elements of AI governance are the most critical to achieve in the immediate term given the rapidly expanding use of these tools?

This is an active discussion around the globe. We’ve been early supporters of new regulatory models for AI. We have also signed on to voluntary commitments and published our own AI principles back in 2018. These efforts underscore the importance of our long-standing commitment to human rights.

The Organization for Economic Cooperation and Development (OECD) has been working in this area for years, the Group of Seven’s Hiroshima process has taken a leading role in discussing global principles, the United States has laid out its own frameworks, and Europe is advancing its AI Act. Various countries and states are looking to develop their own approaches, ideally harmonized across borders.

In addition to new legal frameworks, we’ll need new social norms, which establish important benchmarks and clarify guardrails. For example, genetics researchers established a set of norms in the 1980s that have lasting impact today, setting a framework around genetic experiments involving people. A similar model for AI research could help steer development. I hope that democratic nations will take the lead in aligning around some basic norms that can help set parameters for the future.

Large digital platforms will be a key vector for disseminating AI-generated content. How can existing standards and norms in platform governance be leveraged to mitigate the spread of harmful AI-generated content, and how should they be expanded to address that threat?

We’ve all learned a lot over the last twenty years; the rise of generative AI has sparked much more sophisticated conversations about trust and safety than we had at the outset of the internet. While we may see more misleading AI-generated content, AI will make content moderation faster, broader, and more consistent, ultimately helping us to stay ahead of the game.

We need to work across the sector and with governments to get this right. At the end of the day, international human rights standards must continue to guide our thinking about how to responsibly address these challenges.

When it comes to multi-stakeholder bodies, we can draw on success stories like the Global Network Initiative, which brings together governments, companies, and civil society groups to coordinate on preventing censorship and protecting internet privacy rights. We can build off these efforts as we develop ways of sharing best practices in AI governance. For example, Google, along with Anthropic, Microsoft, and OpenAI, has already launched the new Frontier Model Forum to facilitate work with other advanced AI labs on these issues.

New regulatory requirements will also promote knowledge sharing. For example, the Digital Services Act requires platforms to offer information in more standardized frameworks and formats, making it easier for researchers and civil society to assess companies’ approaches.

Artificial intelligence is a multi-use technology, but that does not necessarily mean it should be used as a general-purpose technology. What potential uses of AI most concern you, and can those be constrained or prevented?

Many AI systems are multi-use. An image-recognition system used for unlocking your phone could also benefit a repressive regime that wants to identify protesters. We’ve worked hard to take a responsible approach when releasing our research. For example, we’ve held back releasing the full results of some of our research when we felt that it could be misused.

I also believe that—as with electricity—we won’t end up with one-size-fits-all rules or a single body that governs all AI, if only because it is such a multi-dimensional technology. Rather, AI will be built and governed by a wide range of different entities and in accord with distinct standards, depending on the purpose and impact of a particular application. The risks and benefits of AI differ across banking, healthcare, and transportation. We need a hub-and-spoke model that allows sectoral regulators to assess the impacts of AI in those areas, while a central organization develops expertise and state capacity. We invest a lot in understanding the potential misuses of AI, precisely so that we can try to get ahead of potential abuse cases.

To close us out, what is the question you most wish I had posed, and what is your answer to that question?

One question might be: How is AI helping us today in ways we might be overlooking? If you’ve used Google Search, Maps, Translate, or Gmail, AI has been helping you for a dozen years. There’s been so much focus on AI chatbots that we risk missing that AI is already helping us revolutionize the way we do science. By way of example, our team at DeepMind used AI to predict the shapes of 200 million proteins, nearly all the proteins known to science, an advance that’s now being used by more than a million medical researchers around the world. Scientific progress drives economic productivity and higher living standards, and raising both has been one of our key social challenges over the past couple of decades.