Year in Review: Tech Companies Grapple with Disinformation

2017 was the year that tech companies faced a difficult reckoning over their role in spreading online disinformation.

By experts and staff

- Guest Blogger for Net Politics

Lorand Laskai is a research assistant in the Asia studies program at the Council on Foreign Relations. You can follow him @lorandlaskai.

Admitting you have a problem in four acts:

Act I: Denying the problem exists

If 2016 was the year of Russian information operations, fake news, or “post-truth,” then 2017 was the year that tech companies faced a difficult reckoning over their role in spreading online disinformation. Silicon Valley’s absolutist embrace of free speech, conviction that connection is a social good, and deep-seated laissez-faire attitudes toward regulation have long informed social media companies’ approach to policing their platforms. Thus, even after a year of alt-right trolls running amok on Twitter, Russian agents propagating divisive political messages online, and one small town in Macedonia turning pro-Trump fake news into a prosperous cottage industry, social media companies began 2017 struggling to acknowledge the scale of their disinformation problem. In November 2016, Mark Zuckerberg captured the sentiment of the industry writ large when he called the notion that fake news on Facebook influenced the election “a pretty crazy idea.”

Act II: Damage control

On January 6, the intelligence community released a report that asserted that Moscow had used third-party intermediaries and paid social media trolls to help President-elect Trump’s election chances. Whether or not social media companies thought disinformation was a big deal, others were clearly looking to them for action. In January, Google said that it banned 340 fake news sites from using Google Ads to monetize web traffic. In April, Facebook’s security team dropped the company’s first white paper on information operations, shedding light on how Facebook deals with state-backed actors peddling disinformation. By May, red “disputed” tags began appearing on Facebook users’ feeds—an effort by the company to flag bogus content.

In Europe, the social media giants geared up to fight fake news and propaganda in the run-up to elections in France, Germany, and the United Kingdom. Brussels and European governments have long been skeptical of big tech’s hands-off approach to online speech and have threatened steep fines for companies that fail to take down hate speech and disinformation online. By many accounts, the vigilance of social media companies during the French election made a difference. And yet, the companies continued to discount the role of disinformation in the 2016 U.S. election. Throughout the summer, Facebook repeatedly denied that Russian agents bought political ads on its platform, and Twitter issued a press release on combating bots and disinformation that was panned for not taking the problem seriously.

Act III: OK, maybe we have a problem

By the fall, it was increasingly hard to deny that the problem was much larger than the companies first let on. A report from the Oxford Internet Institute found that people shared almost as much fake news as they did real news on Twitter in the lead-up to the 2016 election. In a post on September 6, Facebook’s Chief Security Officer Alex Stamos walked back the company’s previous statements and revealed that Russia-linked troll farms had purchased around $100,000 in ads on the social media platform. Weeks later, Zuckerberg did some walking back of his own, apologizing for dismissing the influence of fake news. “Calling that crazy was dismissive and I regret it,” Zuckerberg wrote in a post.

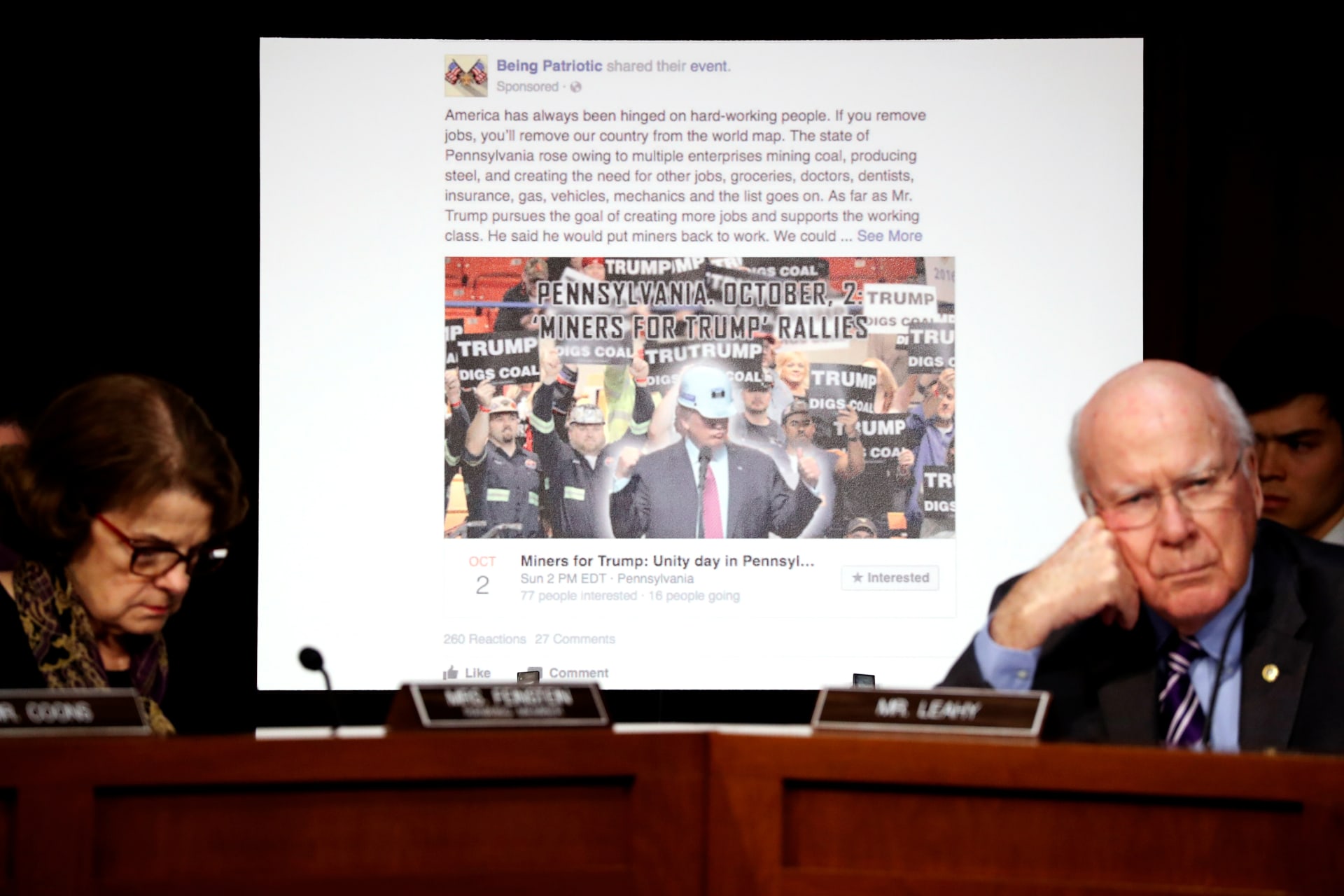

Despite efforts to get ahead of the brewing controversy over disinformation, the response of social media companies was hobbled by their initial refusal to address the scope of the problem. In October, Facebook acknowledged that at least 126 million Americans saw political ads bought by Russian-backed agents. On October 30, contrite executives from Facebook, Twitter, and Google appeared before frustrated lawmakers, where they signaled that they would do more to combat disinformation. The revelation of Russia-linked campaign ads also means that social media companies will need to grapple with potential congressional action and further regulation from the Federal Election Commission on online political disclosures.

Act IV: Acceptance is only the start

While much of 2017 was spent debating the scope of tech companies’ disinformation problem, how tech companies should respond to disinformation remains at issue. Despite efforts to purge fake content, Google News has been repeatedly duped this year into promoting conspiracies and fake news after mass shootings. In December, Facebook stopped flagging disputed articles after finding that the system was ineffective and that it often entrenched people’s beliefs rather than informing them. In addition, platforms that have received less attention, like Instagram, still provide considerable leeway for Russian troll farms to spread propaganda. These issues all but ensure that disinformation will remain a hot topic in 2018. As for residents of the Macedonian town that made a fortune off fake news during the 2016 election? They’re busy preparing for the 2020 election.