The Future of AI, Ethics, and Defense

Speakers discuss the intersection of technology, defense, and ethics, and the geopolitical competition for the future of innovation.

The Malcolm and Carolyn Wiener Annual Lecture on Science and Technology addresses issues at the intersection of science, technology, and foreign policy. It has been endowed in perpetuity through a gift from CFR members Malcolm and Carolyn Wiener.

KORNBLUH: Thank you so much. Welcome to today’s Council on Foreign Relations virtual meeting on the future of AI, ethics, and defense. I’m Karen Kornbluh, director of the Digital Innovation and Democracy Initiative, former U.S. ambassador to the OECD and former senior fellow for digital policy here at CFR. Today’s meeting serves as the Malcolm and Carolyn Wiener Annual Lecture on Science and Technology. The lectureship addresses issues at the intersection of science, technology, and foreign policy, and is generally, generously, excuse me, endowed in perpetuity, through a gift from CFR members Malcolm and Carolyn Wiener, who are joining us today. We have more than 500 people registered for this virtual meeting and we’ll do our very best to get to as many questions as possible during the question and answer period.

Taking advantage of the enormous opportunities of the AI revolution, while also mitigating its risks, requires new governance institutions and mechanisms. But the issue has barely come up in the election and it’s not clear whether we as a country are even up to this challenge. We’re in the midst of a governance crisis in which trust in our institutions, our governments and each other is at an all-time low. This is in part of course a result of our failure to respond to the last technological revolution. Fortunately, CFR has assembled the three leaders at the very forefront of developing strategies for this critical moment. It is my great honor to introduce our distinguished panel. Ash Carter directs Harvard’s Belfer Center for Science and International Affairs, and is a former U.S. secretary of defense, where he created bridges between DOD and Silicon Valley. Reid Hoffman is partner at Greylock Partners and cofounder of LinkedIn, and was a founding member of the Defense Innovation Board. Fei-Fei Li is the Sequoia Capital professor in computer science at Stanford University and codirector of Stanford’s Institute for Human-Centered Artificial Intelligence. The short discussion will be focused not on the philosophical, but the practical. It’ll have three parts. What actions would allow the U.S. to benefit the most from AI? What can we do to mitigate its risks? And what is the best way to get started on these enormous tasks? So part one, opportunities, and I’d like to start with you, Secretary. A big question for many in the audience is where do we stand vis-a-vis China in AI, especially with regard to our military preparedness? And what should we be doing to ensure our ongoing military superiority?

CARTER: Well, I’m pretty confident in our current strength vis-a-vis China. Let me say just at the very beginning, I don’t think a war with China is likely, still less inevitable, and it is very much something to be avoided. However, in the end the answer to your question, it’ll be a long time before China has the comprehensive military power that the United States has, Karen. I mean, for starters, just a simple metric, we spend a lot more than they do every year, that obviously doesn’t mean everything, but it means something. Secondly, we have spent a lot more than they have for decades, which means our accumulated stock of equipment and technology is much greater than theirs. Third, our officer corps has recent operational experience, which I think is significant. And then fourth, if we don’t mistreat them, all the allies are ours and China has none. So those are a lot of really very significant strengths. Now, at the same time, we all know China’s economy, unfortunately, not unfortunately, but certainly unfortunately in comparison to us, grew, last year, this year; ours shrank this year because of the mismanagement of the COVID response. But so they’re, they’re coming up.

And I think the real thing, Karen, and I’m sure you’ve noticed as well, is that whereas, say, twenty years ago, I stopped believing this ten years ago, but twenty years ago, we could have continued to hope that China would turn out differently, turn out more like us. It hasn’t. China’s turned out like China, a communist dictatorship. And that, that is going to be a challenge for us. It requires a different kind of playbook from the one we had in the Cold War. And my first job in the Pentagon was for Caspar Weinberger, the Reagan administration, so I know the Cold War well. That was entirely different. That was a, that was a geostrategic opponent with whom we did not trade. We are, we do trade with China, we’re going to continue to trade with China. So somehow there’s going to be a mixture of segregation and integration there. And I think navigating that is the key to geostrategic policy with respect to China, both militarily and technologically.

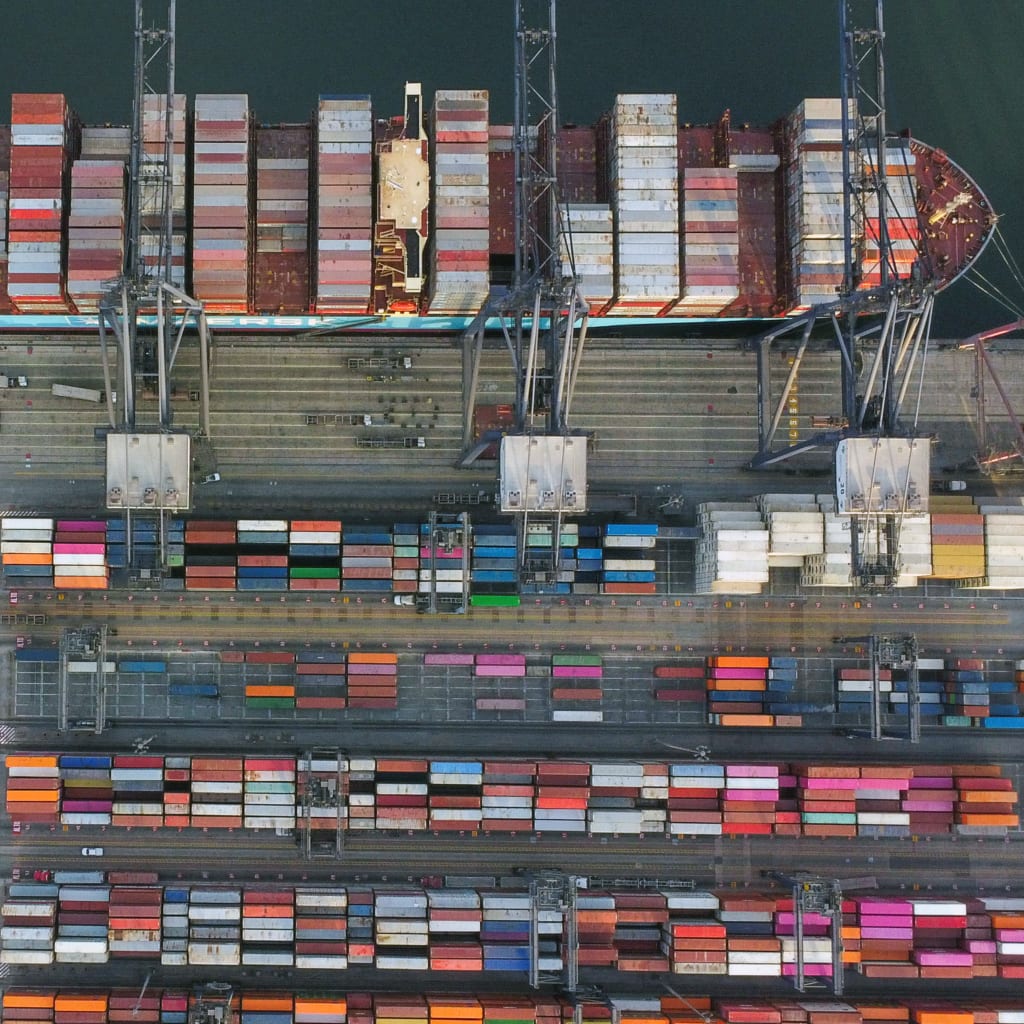

KORNBLUH: Great. I’m just going to turn to the domestic civilian. Reid you said that AI is going to have the kind of economic and cultural impact that electrification, railroads and other technologies had as the second industrial revolution did. You know, we’re here at the Council on Foreign Relations, how can these benefits be realized by other countries which may be behind the U.S. and behind China in the AI race? Or are we going to find ourselves in a new era of what some have called data colonization?

HOFFMAN: Well, I think there’ll be a bunch of different ways. You know, it starts with the fact that there are a number of institutions, not just the Stanford Human-Centered AI, which [INAUDIBLE], but also OpenAI, which I’m on the board of. I’m also the chair of the advisory board for Stanford HAI, that are actually trying to make sure that these benefits spread, right, that there’s a kind of a collective view of what kind of governance and ethics might look like, of what kinds of technologies might be available. But, you know, that being said, there’s a couple of things that obviously, just as kind of classically, the more technologically advanced nations, more wealthy nations will have, you know, kind of earlier advantages on them. Some of it is the pure build of the technology, some of it is also of course the modern AI is in depth compute, right? So you need large computing centers. Now, you know, a lot of the American tech giants, you know Microsoft, and Google, and Amazon, and Apple are all putting, well especially the first three, are putting significant data centers in various places around the world where you can do a lot of compute. And so I think there’s ways that will happen. Now, as a kind of a, as an end gesture to it, if you look at, for example, what OpenAI is doing, it has released, currently released GPT-3, which is a very interesting large scale language model. It has essentially opened its APIs to developers, and they’re not just developers in the U.S., but to say, hey, if you want to go in and bring datasets and also try to build applications on top of this, this is something that you can do. And so I think that’s one of the ways that the rest of the world will get included.

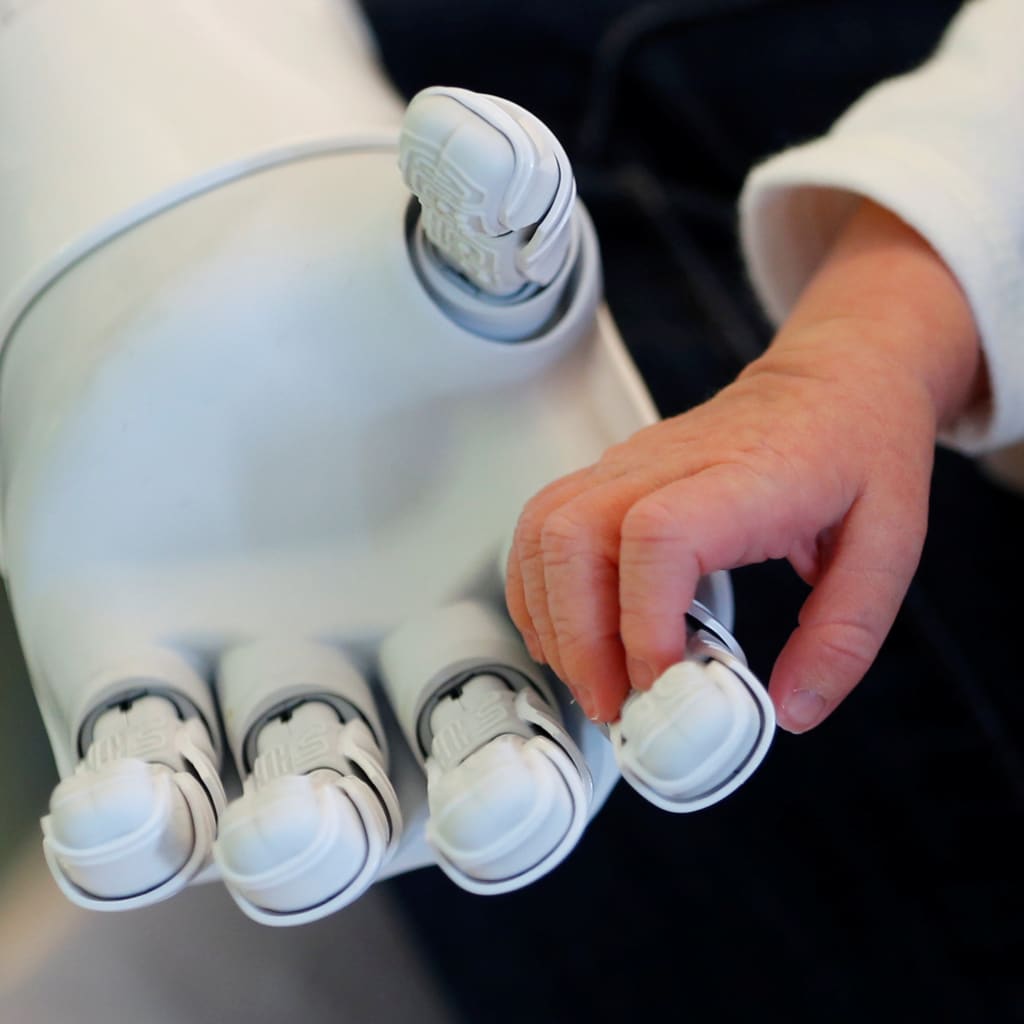

But you know, it’s in this proxy, I mean, I do think very strongly that the benefits of what the AI revolution will revolutionize all industries, will revolutionize, you know, the kind of way that content is discovered and produced but also like robotics, like you want middle class jobs and manufacturing jobs to come back to the U.S. I think it’ll be robotics that will do that. I think similarly, when you kind of get to these other countries, it’ll be the okay let’s make sure they’re included, right. It’s kind of like, well, we have a mobile revolution with smartphones and so forth. You know, obviously, the manufacturing tends to be in a few countries, the OSs tend to be in a few countries, most app development, not all, is within a few companies. But other app development also happens within other countries. And I think, but yet the whole world does benefit from the smartphone revolution. So that’s, I think, the angle and the way of thinking about it.

KORNBLUH: That’s great. And then just to round out this part, where we set the table, Fei-Fei, please tell us what we can do to usher in what you’ve called the benevolent machine that it’s going to make a difference in some of our social challenges. And I think you focus specifically on our healthcare system, which is a good place to start right about now.

LI: Yeah, thank you, Karen. So, you know, here at Stanford Human-Centered AI Institute and for years, my colleagues and I really truly believe there’s no independent machine values, and machine values are human values. And under that premise, it really speaks to the core of what we hope AI could bring to humanity, to this country as well as globally, is to unleash the positive potentials and benevolence of the technology and mitigate the risks, the dangers, and so on. You’re right, healthcare is dear and near to my heart and on everybody’s mind these days. So I’m seeing a kind of a watershed moment where the healthcare industry is looking at the potential that machine learning, data analytics, and AI can help. We can even just take COVID as an example that we could have imagined or can imagine that from contact tracing to drug discovery to vaccine discovery to health care delivery of patients already in ICU, or senior chronic care in homes, all these scenarios, and of course medical imaging, and pathology, and all that, all these scenarios now are potentially data driven, and have a lot of room for a technology like AI to make a huge difference. And you know, personally at Stanford, we’re working with doctors right now to help with improving healthcare delivery using smart sensors, and, of course, respecting ethical privacy issues. But these sensors can help patients and doctors to really, in real time, catch health critical moments, and hopefully improve treatment outcomes.

KORNBLUH: That’s, thank you so much for being so specific. I think it’s very helpful. So just to talk about the risks, because as Reid and Fei-Fei have both talked about, there’s a danger that we won’t realize some of the potential of AI because people are very concerned about some of the downside risks. And Ash, you’ve talked specifically about the black box in which AI makes decisions. You spoke about it first in terms of lethal force, but in general, I think you’re talking about the broader issue of accountability. Can you talk about that? And also, again, here we are at CFR, if we wanted to create global norms around that, so not just constraining ourselves, do we have the leverage to do that?

CARTER: Alright let’s start, let’s start with this question of accountability and transparency because I think, I think that’s the critical issue for the deployment of artificial intelligence. Fei-Fei talked about health care. That’s a grave sensitive matter. Defense is a grave sensitive matter. Law enforcement is a grave sensitive matter. Now, so if you’re going to take AI, and you’re going to apply it to grave sensitive matters, it can’t be like, like advertising, where if you get it wrong, well, so what. This is serious business in my world, the world I inhabited as Secretary of Defense, it was lethal business. And I said, actually, I wasn’t Secretary of Defense, I was Deputy Secretary of Defense back, way back in 2012. This question was first asked when I was the COO of the Defense Department. What about AI and lethal autonomous weapons? And I said, we’re not going to have totally autonomous lethal weapons. There has to be a human being involved in the decision making at some stage. Now, Karen, where things get deep with AI is when you say, well what do you mean by having a human being actually involved? I mean, you can’t be like a chip in a circuit diagram. It’s not man in the loop, in that sense, it’s a different kind of responsibility. And then you have to also reckon with the fact that AI algorithms are obscure. It’s very hard to de-convolve how an inference was made in many cases. Some of them are proprietary, although we can’t tolerate that at the Defense Department, I don’t think most customers should accept black boxes from vendors, they should require some level of transparency. Then there’s the data. So that’s the algorithms, then you go to the data sets, how good is the data? Is this biased data? Is this outdated data? Are you just going to be recapitulating baloney? Who labeled the data in the first place? Who said that picture was a cat? Who said that picture was a person? And so you have to really work at this.

However, I believe that these are tractable problems. There are some broadly similar things, Karen, in for example flight software. Everybody’s familiar with the 737 Max, if you read that drama, that is a case of again very trusted, very complicated, layer after layer kind of software. It’s not AI, but making a big mistake. And yet you can go back and figure out what went wrong. And in the same way, I think in defense where we deploy AI or in commerce, when I talk to companies and advise them on buying AI, I tell them, here’s some practical things to ask about the algorithm, to ask about the data set, to ask about testing. Did you have dueling teams testing this? Have you tried it against this scenario or that scenario? So you get to a point, Karen, where you know, you don’t completely get off the hook. So if I’m Secretary of Defense and we kill women and children, I feel responsible. And I can’t go out to the press and say the machine did it. But I think you can get to the point where a reasonable person would say, you made a reasonable effort with AI to make sure that the moral thing was done. That isn’t always going to work. But you made a reasonable effort and a judge, a jury, a press conference, a political leader will accept some level of reasonable explanation. I think we can give that even despite the complexity of the AI.

KORNBLUH: So if we want to add to the accountability question, the issue of explainability. I know Fei-Fei, you’ve looked at that and talked about that. It’s so hard to think about how a human can understand and be explained to about how an algorithm has made its decision. But can you talk about what you all are doing to work on that?

LI: Yeah, Karen, thank you so much. And Secretary Carter, your level of understanding of AI is so deep and impressive. And I think this is a really important element and aspect of AI. So look, let’s just be first clear that humanity has been innovating machines and to be honest, depending on the audience, not every machine we’re using is explainable. I was just thinking, do I know exactly how my dryer is working? I don’t think I know exactly how my dryer works, or my washing machine. But there’s something deeper about that, which is, first of all, there are experts who do, there are regulatory agencies and offices that do and help to put guardrails and inspect, and there is enough public trust, when you buy a washing machine and a dryer, that this particular piece of technology is a trustworthy piece of technology. And I think AI is so early in its entering the world of the public that we need to start building that framework of trust into the design, development and deployment of AI ASAP. I hope it’s not too late and I don’t believe it’s too late. But as my colleagues at HAI keep saying, bring trustworthiness and ethics; bake that into the design process and all the way through the data pipeline, the algorithm pipeline, the decision making pipeline, the business deployment pipeline, and part of that is explainability. I think mathematically depending on which kind of algorithm, there are things we can explain. There are things that can only be explained by a smaller group of experts or potentially regulatory bodies. So we have to take this with a nuanced approach, and also layered approach. And one last thing, I think I hear from Secretary Carter that I think is so important, is the multi stakeholder approach, is that whether it’s the developers, the business, the policymakers, the consumers, the civil society organizations, the academia, we have to come together and build that trustworthy framework for AI.

KORNBLUH: So you jumped on what my question was going to be for Reid. It’s a perfect segue, because I know that the impetus for Stanford HAI was in part to bring in a variety of stakeholders, because, as you said, part of people’s nervousness was that corporations were in the driver’s seat. And you thought that there should be other stakeholders more obviously involved from the very beginning. And I’m just wondering, because Secretary Carter and Fei-Fei Li both talked about, they mentioned in passing regulation, and courts, and inspectors and so on. So I’m wondering how do we think about, especially because you’ve been so involved in the recent history of the internet, which has also had this multi stakeholder model, how do you, where do you see the role of the multi stakeholder model versus laws and regulations, whether it’s privacy that’s across sectors or I heard Michael Kratsios talking recently that the administration is looking at maybe sectoral regulation. Is there a role, I guess just generally, for law and regulation as well as ethics and multi stakeholderism?

HOFFMAN: Well, there’s obviously good roles for all of them, good roles for multi stakeholderism, a good role even for laws and regulations. You have to be careful, because one of the things that happens too often with regulatory tools is that you’re enshrining the past against the future. And you have to think about if really, if cost and impact is extremely high, you can have a systemic, like, for example, you know, what Secretary Carter was talking about outcomes or these kinds of things that ends up having a very strong, you know, like, okay, let’s try to make sure that we don’t make mistakes there. However, most often, one of the questions is that the technological adaptation in the future can be actually an important part of the solution, like we steer towards utopia versus dystopia, and that dynamic adaptation to better outcomes in the future, you want to make sure that they happen and that they happen the right way. And so you want to avoid kind of serious systemic failures. That’s part of where you have some regulation and kind of transparency. But the mode of how you create regulation is really interesting here. Because if you say, well, actually, in fact, the most interesting tech is still to come in the next ten years, whether that’s for our social outcomes, whether that’s for our geopolitical position, whether or not that’s for how our industries are amplified, or what they do, right, then you really want to make sure that you’re developing those technologies, and doing it at a quick clip. And by the way, privacy is a perfect example of where you can be careful with this. Because you say, well look I think there are these really important parts about privacy, but when you get to the pandemic, you say, well actually, in fact, you know, aggregating data in a pandemic in order to understand a set of things around therapeutics, a set of things around vaccines, a set of things around, you know, kind of the actual medical stuff, is super important.
Contact tracing, which is usually counter argued on a privacy basis, you know, when you look at the most successful responses to the pandemic, like Taiwan, is an extremely intensive contact tracing regime together with quarantine from that, which you’d say, well, that’s some abrogation of privacy. But you say, well, but that’s important, because, you know, you basically, like they never had to do a shutdown, they don’t, and they have far fewer cases, far fewer fatalities than the disastrous handling that we’ve had here in the US, where we’re five times, I think, the fatalities of the global average. Right? So it’s just like okay, you know, despite all our technological leads, and all of our, you know, like our leads, and generating using science and everything else, to generate vaccines, our mishandling of that. So you have to, you have to look at this the right way. And that’s the benefit of the multi stakeholder model because you’re saying, look we’re trying to adapt to positive outcomes as well as trying to structure against negative ones.

Now, let me use another example of this, is like you say well in AI, you know, we clearly have a problem with AI in criminal justice stuff today, like the parole recommendation programs tend to just generalize over the current set of data that they use, which tends to mean that for minority communities or other communities that are heavily policed, where there’s a lot of data, they tend to generate, you know, algorithmic outcomes that reinforce bias, and that’s a serious problem. The benefit is, if you actually in fact then start using AI, and validation tool sets to make sure that you’re not factoring in essentially racial injustice, and you’ve retooled them, then you can actually make everything a lot more just, right, because once you’ve actually got a whole bunch of eyes, cross checks, validated data sets, new technologies for explainability of these complex models which Secretary Carter was talking about, you can then begin to say, okay well, now we actually, in fact, are beginning to apply something that doesn’t give, you know, kind of the bias that some judges could give, which could be a racial bias or economics bias or something else, and then that can then be kind of weighed against some. And so given all of that, I think the important thing is to say, identify when you’re going to do regulation, identify the things that you think are this specific, massively bad thing, systemic failure, lots of bad impact. Okay let’s be very careful when we are doing that, and on the rest of it, let’s use more multi stakeholder or more, kind of, transparency and dialogue, and iteration mechanisms, so that we can improve the technology to the better futures that we see.

KORNBLUH: Wow, there’s so much there. I would love to get into more of that. We only have a little bit of time before we turn it to the rest of the audience. So I’m going to turn to priorities. We have this election coming up and I want to ask each of you, once the election is over, what should the president, whoever he is, because it’s going to be a he either way, see as his role in this aspect, this area and what should he do first? So what is his role? And what should he do first to start putting us on what you feel is the right path? And why don’t I start with Fei-Fei this time. You have an idea specifically for a national research cloud. Can you tell us what that is and why it’s important, and what do we need to do?

LI: Yeah, thank you. And I think one of the biggest priorities in my mind as a proud American scientist, and taking so much pride in what America has done to lead the innovation of the world in the past decades, is to ensure our innovation and competitive advantage through that kind of incredible work we have given to the world. And this was, to a large extent, the result of a very highly productive interplay and integration of federal government, research universities, and the private sector over the past decades and decades of innovation. And, and I think, in the AI era, this beautiful but fragile ecosystem is a bit at risk, to be honest, because the resource right now of AI, as Reid said, it’s in depth compute and also massive data, is now more and more just concentrated in the private sector, in the tech sector, which is innovating rapidly and it’s wonderful, as long as we mitigate the risk. But it’s also creating a talent drain as well as an innovation vacuum more and more in the public sector, especially the public sector where research and education happens. And innovation is, in general, for public good, and especially in our world class universities and colleges. So if you look at the data, the demand for AI, for trained AI and CS talent, is just soaring. But now you’re seeing academia where talent is being drained, as well as, you know, the siloed data and compute in industry, which is also draining academia of innovation. So that’s really the birth of the idea of the national research cloud, spearheaded by Stanford HAI but with many university and industry partners, that we are asking Congress and Senate to pass a piece of legislation that would form a task force to seriously look at how we can build, you know, partner with industry, academia, and federal government to create this national compute cloud, to increase dramatically the compute and data resources for public research, for universities and academia.
And this way our hope is that we can really invest now and for the future, to create the talent we need and ensure the innovation our country continues to need in the case of AI.

KORNBLUH: And Reid, you advise entrepreneurs, you invest in entrepreneurs, what, if anything, can the government do to help them address some of the critical social challenges that you feel that they can help address with AI? Is there anything the government can do? Should it just stay out of the way? Or can it help create some kind of environment that will help these entrepreneurs?

HOFFMAN: Well, look, it’s really helpful to actually have a whole set of things like for example, things like HAI, one of the things Fei-Fei hasn’t had a chance to talk about yet is that they’re doing innovation on kind of how do you do the ethical cross check? How do you figure out what the technology is and what questions, but also what kind of tools do you need in order to do that? And so you know supporting university efforts like Stanford’s and others would be, I think, really key for something along the social particular thing that the government could do. I think, in terms of the raw entrepreneurial part of it, that’s kind of go back to the American dream, which has been, you know, kind of, you know, broadly more squished in the last four years. Which is like, okay, here’s a set of tools, we bring in very smart people, like, you know, Fei-Fei was mentioning that we have a paucity of talent here. You know, the American superpower over the entire history of America is immigration, like the thing that we do better than everyone else. So go get some of those great people and have them come in and help staff up and help us, right, that would be a very pro entrepreneurial, like how to make that work. And then, you know, look, just as a broad thing, I tend to think that, you know, now more and more, we’re accelerating the future and everything scales with technology. So you need to have a technological foot forward. You need to have a, you know, whether it’s the National Research cloud, but how to kind of AI, how do we double down on the strength of our assets.

We have these amazing universities, we have amazing companies, we have an amazing entrepreneurial basis. Like deploy those and to help the rest of the country. Because like one of the things that I was mentioning earlier, is you say, well we strongly for our democracy need a return of the middle class jobs, that’s correct. We need to very much strengthen that. Well, one strand of those jobs is manufacturing jobs. I think our only hope to return to manufacturing is AI and robotics. So how to, like, let’s accelerate towards that so we get those kinds of manufacturing jobs growing again, as one instance among others within the US, as part of making that happen. That will then separately create entrepreneurial opportunities, but those are all kind of the scale of the kinds of things that the next president should actually, in fact, you know, be thinking about very seriously, and using the incredible podium and powers of the federal government in order to, you know, help build our future.

KORNBLUH: Wow. And Ash, I just want to turn back to this issue of China, because here in Washington, we’re pretty obsessed right now with China. And if we’re going to create the middle class jobs that Reid talks about, there’s a sense right now, at least coming from the administration, Adam Segal of CFR just wrote recently, that there’s a coming tech Cold War, if we stay on this current strategy, that would be blocking the flow of technology to China, and then reshoring some supply chains and reinvigorating US innovation. Others are talking about, you know, we’re so integrated, we should have a much more incremental approach. How do you see that dynamic playing into what Reid just laid out? And, you know, do you take one of those two paths, you know, incremental or decoupling?

CARTER: Well, decoupling is not our choice, Karen. The Chinese have already announced, essentially, that they intend to be as autonomous as they can possibly be in all areas of technology, that they intend to conduct digital life in China according to Chinese political principles, which are different from our political principles. So this isn’t really our choice. This is a choice the Chinese have made and are making. Now, we’re gonna have to live alongside that just like we need to live alongside the Chinese military and Chinese companies and so forth, and not have World War III, God forbid, or even a Cold War, but stick up for ourselves and be competitive. Now, what I’d like to see from the next president in this area broadly, I think on China, we’ve got to elaborate this playbook. Tariffs are a tool in it, but we don’t really have a very subtle playbook yet for this non-Soviet, semi-Cold War, but trading-with-the-enemy kind of situation that’s so novel. I think I know what’s in that playbook, but I don’t see us deploying that yet. In tech at large, Karen, a big picture, we need to land some of these planes. What mixture of self-regulation by companies and regulation by the government gets to the right place, let’s say in social media, so that they’re not cesspools? Is it twenty-eighty percent, eighty-twenty percent? And we need to settle that ball down, we need to settle this antitrust ball down, that you see, there’s going to be a hearing about social media next week, there’s going to be, there’s a lawsuit filed by the Department of Justice. And the second one, AI, same thing, I think there will be issues in which some combination of regulation by government and self-regulation by technologists will be optimal. And we need to get to that point of optimality. By the way, we have a bio revolution coming, which will make the digital revolution look like a piker in these moral terms. So Karen, I just think we need to land some of this stuff.
We have had hearings, we’ve had, and we’re not getting anywhere in terms of getting to an equilibrium where we can go forward in the brisk way that Reid was talking about. So I hope the next president, he can’t do this himself, gets government and industry together and says let’s settle these balls down a little bit. That’s an important part of being together and having it together and being strong.

KORNBLUH: Right.

HOFFMAN: Can I add one thing to Secretary Carter’s.

KORNBLUH: Yeah.

HOFFMAN: So one thing I think that people don’t get right about technology strategy is that what we’re trying to do as a country, everything from, you know, Joe Nye soft power to other things, is the projection of technological platforms. So some of what Secretary Carter said is absolutely right, which is, you know, China’s trying to build up its own entirely different base and so forth, and you know, that we have relatively little, you know, kind of optionality. But we do get to specific kinds of instances. So for example, autonomous vehicles, where, as far as I can tell, we are currently ahead in building an autonomous vehicle technology. If we say, well, we’re not going to allow any of that to, for example, go to China, because we have a worry about like autonomous tanks or something else, which I don’t think is actually in fact, the real big worry in these kinds of things.

CARTER: [INAUDIBLE]

HOFFMAN: Yeah, a little bit, I wasn’t actually putting words in your mouth. I know, you’re smart on these topics, I’m just making sure that everyone else knows it too. And so you want to actually, in fact, a central part of a national strategy, of a soft power strategy, of an industrial strategy is to get as much of the world to build on your technological platforms as you can rather than withdrawing them and retracting them. So you say we have an advantage in AI and building autonomous vehicles? Let’s build autonomous vehicles for the world. Right? And let’s have that be part of what’s multi stakeholder. And one of the things I’ve seen a lot of, especially in the last four years, has been the, no we hold on to it, versus the we have, as part of the, you know, the kind of American global order is that spreading of our technological platforms. And I think that’s super important on all levels from national security, industrial and humanity outcomes.

KORNBLUH: Great. So I’m gonna open the Q&A session. I’m going to invite members to join our conversation with their questions, remind folks that the meeting is on the record, and the operator will remind you how to join the question queue.

STAFF: (Gives queuing instructions) We will take the first question from Alan Silberstein. Mr. Silberstein, please accept the unmute now prompt.

Q: Yeah, it was an error. Sorry.

STAFF: We will take the next question from John Holden.

Q: That’s interesting. I didn’t realize I had pressed a button to ask the question. But I do want to congratulate the panelists for an absolutely scintillating and informative discussion. The question I have getting to Secretary Carter’s point is how do we generate a consensus or a movement towards a consensus when the national politics are as divided as they are? Any thoughts on how we move the ball in that direction?

CARTER: Can I just try on something, Karen? I think that there is, oddly, on this issue a little bit more consensus than there might seem to be on many other issues. It tends to be consensus around truculence towards China, and suspicion of China, which I think has a lot to be said for it. But as Reid has been stressing, that isn’t a strategy. A strategy needs an offense as well as a defense. And an offense is for us to be better at technology, faster at technology, better at having allies, better at having all the rest of the world playing by our kinds of rules. That’s where we need to be dominant. And so I think if you take this public mood, which is, you know, China, I’m a little suspicious of China, and I have a kind of different feeling when I buy a Chinese toy now than I did ten years ago, I’m still kind of wondering where all this is going. I think that’s widespread and bipartisan, and that’s good. And I think we all ought to help leverage that little glimmer of non-divisiveness in a good direction. But as Reid says, it’s got to have an offense and not just a defense.

HOFFMAN: But I think you got it.

KORNBLUH: Go ahead.

HOFFMAN: Exactly. Next question. I think the Secretary gave it a great answer.

KORNBLUH: (Laugh) Should we go to the next question, Sara?

STAFF: We’ll take the next question from Rob Leonard.

Q: Hi, there. Rob Leonard with the Senate Defense Appropriations Committee. Thanks for a wonderful panel discussion. I wanted to ask the panelists to comment on the state of the technology in terms of kind of setting our realistic expectations in the near term for what we can expect, right. So the public discussion sometimes on AI is that we’ve got smart robots right around the corner. And, you know, I’m not dismissing the gains from machine learning, right, but I don’t know of a way around the four corners problems and kind of bigger, smarter AI applications. So I kind of wanted to get a sense from folks of what should we expect in the next, you know, three to five years in terms of technological gains and limits, I guess, in the AI space? Thank you.

LI: So I can try to take a stab at this. I particularly love questions that are geared towards de-hyping AI, and that happens a lot in the public. So this is a very sophisticated, nuanced question. So first of all, AI is a big salad bowl of technology. And the salad bowl includes speech recognition technology, computer vision technology, natural language processing technology, robotics technology, and a lot of machine learning driven data analytics. So to assess this takes a layer of nuance, and we won’t have that time. But that big machine overlords coming next door is definitely more fiction than fact. You know, Reid talked about robotics and manufacturing. When people ask me where robotics and AI is, I point them to DARPA’s robotic challenge video on YouTube. And if you just search DRC, DARPA Robotics Challenge, I think 2016, and you see all these massive robots trying to open a door and fail in the most miserable and laughable way, it kind of shows you it’s still a very difficult problem. Having said that, I’m not underestimating some of the rapid development. OpenAI, which Reid talked about, has incredible NLP technology for language models that is generating human-like sentences. But if you look deeper under the hood, you start to see that these languages still lack a lot of the human nuance, human context, and some logical reasoning. So what I think is really important in the next three to five years is to have more public education, as well as policymaker education, about what AI is, where this technology is, and to invest in that global multi stakeholder dialogue so that we all as a society get to understand AI and build that trustworthy framework. And in the meantime, working to mitigate, because even if the technology is not great, it’s still creating deep fakes, it’s still spreading disinformation. So we have to catch this moment to do both of these.

KORNBLUH: Great. Reid did you want to add to that?

HOFFMAN: Yeah, you know, Fei-Fei is one of the world experts on these things. So, with a little trepidation, I go afterwards (Laugh). But, you know, I think one of the things also is the velocity. The velocity is very interesting. But what happens too often is people go, oh, with this velocity, like, you know, back in the fifties, we have this velocity of AI, we’re gonna have AI, you know, what it’s now modernly called, AGI, artificial general intelligence, right around the corner. The velocity is going to generate some really, really interesting things. So roughly speaking, if you have data that, call it, tens of human lifetimes could study, all the data, and that’s machine accessible, it’s digitally accessible, you can actually generate, I think, some surprisingly good outcomes. So for example, you know, you already today have, you know, visual recognition that can do skin cancer better than your average doctor, you know, read radiology exams better than the average. The best human is still better, but better than the average, and then make that, to your earlier question, available all throughout the world. And so all of that is actually in motion and the acceleration is going in very interesting ways. And so that’s kind of the way of doing the pattern forecast. On the other hand, the, you know, oh look, the machines are going to be, you know, advancing the edge of physics. That’s not probably anytime soon, (Laugh) right, so anyway.

KORNBLUH: Sara, I guess we can take the next question.

STAFF: We will take the next question from Audrey Cronin.

Q: Hello, this is Audrey Kurth Cronin at American University. So I’d like to ask you about the headwinds to a multi stakeholder or ethical approach as you’re laying it out. There are two big areas where there’s huge political power. The first is in commercial development, because algorithms are proprietary because they’re valuable. And the second is in military competition. AI that has built-in human control may be slower than your adversary’s AI. So where are we going to get the kind of comparable political power, short of some kind of major accident?

KORNBLUH: Reid, do you want to try that?

HOFFMAN: All right, I’ll start and then obviously, very curious to hear from my co-panelists, especially Secretary Carter. So part of the thing, and this may be stealing what Fei-Fei would otherwise say, is part of the whole thing about setting up these centers in universities is to try to have them be network aggregators for technological expertise, intelligence, knowledge, and so forth, that governments exercising good governance can then tap into and leverage for understanding those questions. Because, you know, obviously, right now, when you compare the private industry’s technological competence versus what’s going on within the government, you know, there’s this bit of a not the same playing field. And so the, you know, kind of government doesn’t know what to do, which is one reason they may do bad things, but also makes doing the right things, the helpful things, less there.

And that’s part of the reason why I personally have been putting a lot of energy into helping the university programs, especially Stanford, spin up in order to do that. I also think that part of the reason why we, you know, we try to make sure that the right memes are out there with expertise, with the media and so forth, is so that people can kind of say, okay, that sounds right. Right? We should align behind that as another force for political, you know, kind of force. And then the last thing I would say is, I’ve also personally put in a bunch of time into trying to stand up kind of 501(c)(3) technological forces. Universities are obviously one of them, but like, I was on the board of Mozilla for over a decade, part of the reason I’m on the board of OpenAI, you know, is to try to create these alternative forces that have different political calculus, because the commercial calculus, by the way, has good things, as well as some challenging things, so that you balance them out in this multi stakeholder arena so we can get to better utopic outcomes.

CARTER: You want me to add, Karen? That was an excellent answer.

KORNBLUH: Would you? I mean, you have so much experience both building up expertise in government, but also figuring out how to leverage expertise of Silicon Valley.

CARTER: Well Reid’s right. There’s obviously difficulty having any kind of specialized expertise in government. That doesn’t mean that intelligent policy can’t be made. It just means that you need outsiders to help you. By the way, I’m going to do a little bow in front of brother Reid here, because when I asked for help from him, and Eric Schmidt, and Jeff Bezos, and so forth, they gave it to me. And I was grateful for that. But it really answers Audrey’s question. Yeah, commercial interests are big money, is obviously big and human life, military things, because it has to do with physical human security is the most important thing. If you don’t have it, if you don’t have security, nothing else matters. So it is really, really important. But government is important too. I’m going to kind of stick up for the idea, government is not something out there that exists all for itself. Government is the way we do things that have to be done, that commercial interests don’t have any incentive to do, like build our roads, educate our children, defend us, and so forth.

And so it’s you know, why did I spend a lot of my time in my career? I’m a physicist, I’ve been a banker, I could have done lots of other things. It’s because I think it’s part of how we get good things done is working together. So Audrey’s right. I mean, for starters, Audrey, I think your government is one of those multi stakeholders. Your government at its best, and the way it ought to be, is a force for good where people are trying to do the good things that need to be done that can only be done by collective action. A lot of other good things can be done by the profit motive, by philanthropy, by just human beings being good human beings, a lot of religions, there are lots of good ways good things get done, but governments are one of them. So, Audrey, your question is really incisive. I actually have hope. I think, good people in the end, you kind of prevail. And the government may lumber along but at the end of the day, I always tell people, it’s the only government we got. You can’t walk down the street and shop for another one.

LI: Yeah, just quickly after that really, really inspiring answer: in the healthcare industry, Audrey, we see multi stakeholders coming together. I don’t think it’s perfect. There’s definitely diverging interest. You know, sometimes we look at pharma, insurance, patients differently. But from where I stand, when AI is a piece of technology that can contribute to, you know, improving our healthcare delivery and decreasing costs and so on, I see doctors, patients, insurance companies and our hospital institutions coming together when the right kind of conversations and multi stakeholder convening happen. And that, working in AI healthcare, just like Secretary Carter said, really gives me hope on a daily basis.

KORNBLUH: Great. Audrey, thank you for your question. What great answers. Sara, can you give us another question? I don’t think we’ll beat that one, but.

STAFF: We’ll take the next question from Esther Dyson.

KORNBLUH: Oh, we might, we might beat the last one.

Q: Hi. So I’d love to just hear from all of you. And unpacking, people talk, keep talking about AI being biased or having bad ethics or whatever, and so forth. But it also can reveal the biases by showing correlations we didn’t notice. And it would be encouraging to hear how it can have a positive effect on our ethics instead. And also how we can train babies as effectively as we train infant AIs.

LI: Oh (Laugh). So thank you, Esther, for that question. I’ll try to start first. First of all, I totally agree with you. I really do believe every tool that humanity has ever made is a double-edged sword. It can be used for good and it can be used for bad; even a piece of rock or a piece of paper has that effect. So AI, when we talk about AI doing bad, AI being unfair, I think that language takes away the fundamental problem, which is the human responsibility, you know. We design, and develop, and deploy, and use this. So in the case of AI bias, you’re totally right, we hear a lot, Reid and Secretary Carter talked about law enforcement and parole biases. And we see that in unfair financial decision making, and of course, surveillance and all that. But in the meantime, we can use this to call out biases. One of my favorite examples, a couple of years ago, is a group of researchers using face recognition, especially gender recognition, to call out the bias of Hollywood movies.

They use AI to run through hundreds and hundreds of movies that humans cannot possibly go through, and show that male actors get about two thirds of screen time or talk time, compared to female actors. That is a very powerful demonstration of machine learning calling out bias and giving us hope to mitigate that. Another example, in medicine again: AI, when we look at retinal pathology images, as Reid was mentioning earlier, can start doing things better than doctors or on par with doctors, calling out different diseases. But we have researchers showing that, let’s be careful of that, because AI can also tell genders, and that is supposed to be private information from our patients. So by using AI to expose these issues and give us a chance to improve, it’s a great use of AI. And once again, one more example: in COVID time, we all worry about hand washing. It turns out, even before COVID, hospital acquired infection is a really big issue. It’s a number three killer of our American patients, more than car accidents every year, and that is largely due to lack of handwashing compliance by clinicians, a lot of times unintentional. It turns out, using smart sensors, we can actually help doctors and nurses to be reminded or keep track of their hand hygiene compliance. And that takes away human bias. It takes away hiring a human monitor standing next to patient rooms to call out different people, because there’s just so many errors we can introduce. So these are just a few examples of positive uses of AI, and especially in the bias setting.

HOFFMAN: Fei-Fei said it very well. I agree.

CARTER: I agree. It’s measure countermeasure and AI can help on both sides. It can help cure some of its own ills, detect deep fakes, defeat people who are using AI for cyber-attacks. So it can play on both sides of the moral wall. Sorry, Reid did I interrupt you?

HOFFMAN: No, I’m just unplugging my phone.

KORNBLUH: Great. So unfortunately, I think this is going to be the last question. This has been such a rich conversation. I’m sad to say that. But there’s going to be a reception after for those who are interested. Sara, why don’t you give us the last question.

STAFF: We will take the last question from Scott Malcomson.

Q: Hi. Scott Malcomson with Strategic Insight Group and author of Splinternet. Among the Democrats, there’s a lot of enthusiasm now for a league of techno democracies, as distinct from techno authoritarians, similar idea in Britain. I was expecting it to come up in the course of this conversation, and it hasn’t. What do you see is the pluses and minuses of that approach?

KORNBLUH: Do you want to go Secretary Carter?

CARTER: I don’t know that much about it. Certainly sounds good to me. I think we need to stand up for our values and that our values are embedded in technology. And if that’s the joke, I get it. And so count me in. I’m not familiar with that particular organization, but sounds right to me.

KORNBLUH: Why don’t I, I’ll focus it a little bit, because I’d love to hear your thoughts on this. I mean, there’s some sense that, by blowing up some of our alliances, we have weakened our own ability to spread our values and our norms. You know, can we solve some of the disputes we’ve been having with Europe and our other allies to maybe spread some norms about AI? Anybody who wants to talk about that.

HOFFMAN: I think this is one of the reasons why, you know, the kind of U.S. retreat from globalization is not just bad for us, in the country, but also bad for the world. Because I actually think that the question is saying, there’s a whole set of American values that we think are great for humanity, that get embodied in, like, okay, so, you know, how should tech, you know, as Fei-Fei was saying, it’s always a sword. How is the sword developed? How is it used? You know, how is the sword spread? You know, that kind of thing. And so, you know, I think part of these questions, like, for example, what the right way of putting the human in the loop is, and what are the ways that’s really essential, and how much should technology not be easily deployed in the hands of, you know, kind of autocratic or aggressive regimes, right? I think is also kind of an important thing. And I think all of that is based on our global position. And so I think it’s important to have that kind of leadership. Now, part of that is also to listen, right. Like, I think that, you know, for example, Europeans have a lot of good perspectives that we should always be collaborating with them on. And, you know, I feel that’s another thing that was lost in the last four years.

KORNBLUH: Anybody else?

CARTER: Amen to that. I think people tend to think that these alliances are some gift we give to foreigners. They’re not. Militarily, they’re force multipliers. And that’s a good thing. But more generally, if you’re a business person operating in the world, don’t you want to have the American rule of law at your back? Don’t you want to have most of the countries not run by autocrats who are going to negotiate with you, essentially, coercively? Don’t you want a free market playing field [INAUDIBLE]? So you know, it’s not just something for geostrategic type people to think about. If you’re doing business around the world, it matters whose values are prevailing internationally, and I think we’ve always stood for values that translate into the commercial world. And companies depend upon them. I don’t think we should take them for granted.

LI: Yeah

KORNBLUH: Go, go ahead, please.

LI: No, I just want to add to Secretary Carter and Reid. I think it does take more than virtue to stand up to authoritarianism. And it’s important that we recognize that our values are not just a moral good, they are actually our competitive advantage. And this is why we at Stanford care so much about human value and human centered AI, because we actually have a tremendous foundation, right. We have a society that wants to respect human rights, we want to be inclusive, we want to use AI or technology for good, we can have a culture of transparency and accountability, we can form multi stakeholder alliances to both push for the innovation, but also put up the right guardrails. And these kinds of foundations in our world are our competitive advantage. And in the long run, I think if we invest in this, we will have the better technology to help our society.

KORNBLUH: Well, with those three incredibly inspiring remarks, I want to thank you for joining today’s virtual meeting. Thank you to Secretary Carter, Reid Hoffman, and Fei-Fei Li. Please note that the audio and transcript of today’s meeting will be posted on CFR’s website, and we hope to see all of you at our CFR virtual reception starting momentarily at 5:15 pm. Thank you to our panel very, very much.

(END)

Malcolm and Carolyn Wiener Lecture on Science and Technology: The Future of AI, Ethics, and Defense

Speakers

- Ash Carter, Director, Belfer Center for Science and International Affairs, Harvard Kennedy School; Former U.S. Secretary of Defense (2015–2017); Member, Board of Directors, Council on Foreign Relations

- Reid Hoffman, Partner, Greylock Partners; Cofounder, LinkedIn; CFR Member

- Fei-Fei Li, Sequoia Professor, Computer Science Department, and Denning Codirector, Institute for Human-Centered Artificial Intelligence (HAI), Stanford University; CFR Member

Presider

- Karen Kornbluh, Senior Fellow and Director, Digital Innovation and Democracy Initiative, German Marshall Fund of the United States; CFR Member