Hacking and the Internet of Things

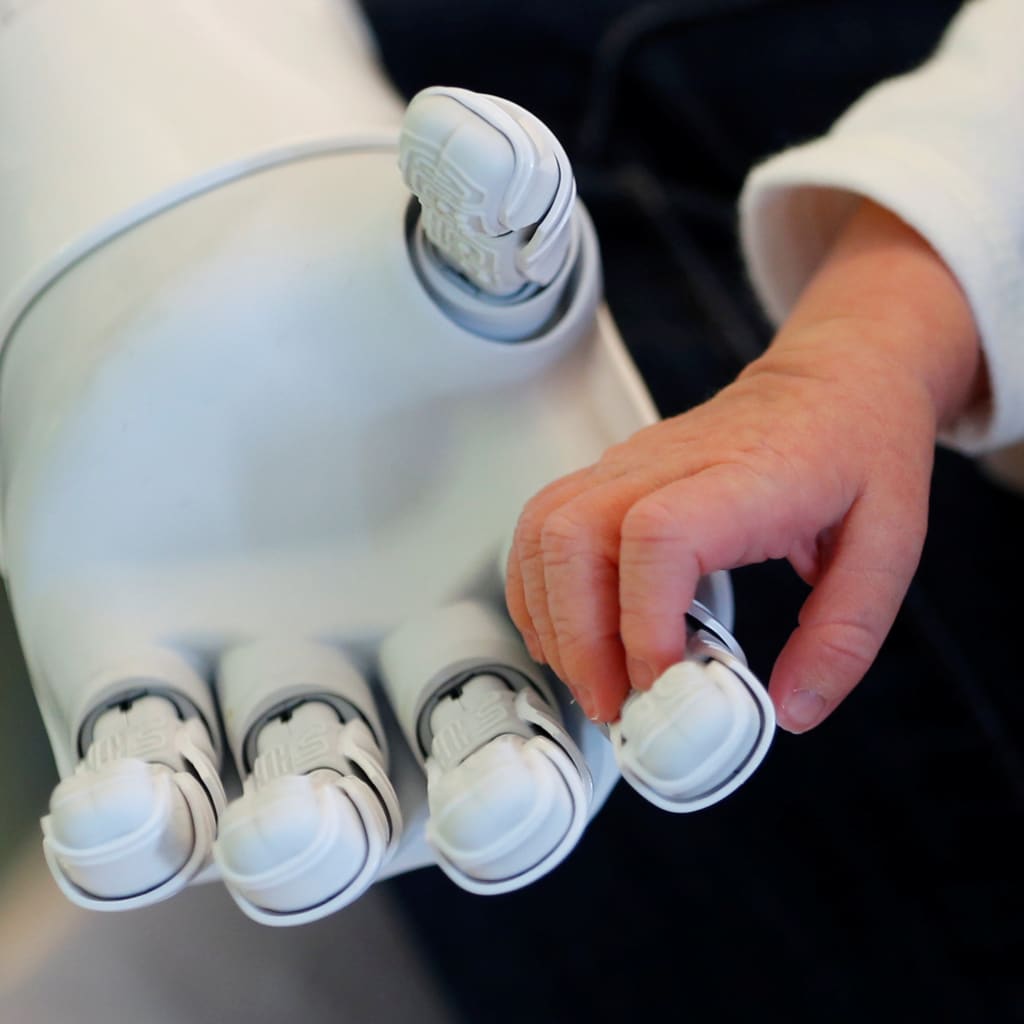

One of the most likely targets of cyberattacks in the digital age is the “Internet of Things” (IoT)—everyday objects that run through internet connections. Pacemakers, vehicles, and appliances are all at risk of being hacked at home and abroad. Panelists discuss the national security risks posed by corruption of IoT devices and ways to mitigate the probability of successful attacks.

TIMBERG: All right. Good afternoon, everybody. Welcome to today’s Council on Foreign Relations meeting on hacking and the Internet of Things. We have a distinguished panel with us here today. I’m Craig Timberg. I’m the national technology reporter at the Washington Post. I’ve been covering privacy, security, and what I think of as technology behaving badly since 2012. And I’ve come to learn that when conversations involve the words “internet” and “security,” they tend not to go that well for everybody. So I can’t promise this will be uplifting, but I can promise that we will try to make it spirited and illuminating.

And in pursuit of that, I want to introduce the people to my right. Starting right here, this is Rob Knake. He’s a former White House cybersecurity official. He’s now a senior fellow at the Council on Foreign Relations. This is Niloofar Razi Howe. Did I get it?

HOWE: Perfect.

TIMBERG: Right. I’m told she will answer to Niloo. She is the—

HOWE: (Laughs.) Anything you call me.

TIMBERG: Yeah, yeah. She is the former chief global strategy officer at RSA Security. She’s also worked in various parts of the government and corporate world. And she can talk about national security issues, as I guess all of them can. And then Beau Woods, on the far end, is the cybersecurity innovation fellow at the Atlantic Council. And he has some expertise in energy systems and transportation systems. And so we’ll try to move broadly through a lot of subjects.

But first, I want to allow each of them just to introduce themselves for a minute or two, and just tell us what’s on your mind as we start this conversation.

KNAKE: Sure.

TIMBERG: Let me start with you, Rob.

KNAKE: OK. So I’m actually at an optimistic point when it comes to cybersecurity. When I look at the overall trends, I think we’re actually heading in the right direction. Things are better than they were ten years ago. Ten years before that, you might not have been able to say that. So overall, we’re heading in the right direction. The cloud is actually making us more secure. The iPhone that somebody’s picking up and taking a photo of right now—(laughter)—is far more secure than a computing device at NSA in, say, 1995. We’re starting to figure out how to do security.

What gives me a moment of pause is IoT, where we have not learned any of the lessons from PCs from the 1990s and applied them today. And it’s worse, because there are billions of them, and billions more are growing. So the optimistic part is we actually probably know how to secure these devices. The pessimistic part is, we’re not doing it.

HOWE: So I would love to inject a little bit of optimism and context for the conversation as well. I think when we talk about IoT, Rob’s absolutely right in terms of the security threat that it represents. But there’s a reason why it’s propagating so fast. And it’s because of the tremendous benefits that can be driven by IoT. On the low end, we expect in the next few years over $2 trillion of economic benefit, up to double-digit amounts of global economic benefit—between $11 and $15 trillion of economic benefit—and in a wide variety of sectors, right? Manufacturing, transportation, utilities, agriculture, health care.

A lot of times the headlines are taken by consumer wearable devices and smart home, but that’s actually not where most of the value is going to be driven. Seventy to 80 percent of the value is going to be driven in business-to-business applications. And when you think about the goodness that creates from a humanistic perspective, it’s pretty tremendous. So we’re talking about increasing energy efficiency. We’re talking about reducing water consumption and fertilizer usage for allowing greater food production as our arable land decreases. We’re talking about using augmented reality for physical therapy, for stroke therapy, when people can’t get to a place. Augmented reality for parents to be able to interact in the NICU with newborns who are very, very ill and can’t have human touch. So the use cases for this are actually pretty tremendous. And that’s why everyone gets so excited about IoT.

The second part of the context is we’re absolutely at the front end of the innovation curve. So this is just starting. And the truth is that, you know, we’re just starting to realize what the benefits are going to be. But what we can expect is that the innovation is only going to accelerate. So wherever we sit today, with respect to IoT, and these devices and sensors in the ecosystem, we’re just at the starting line. And we can sort of try and imagine where this goes, but we’re not entirely positive about it.

Now, the complicating factor is when we talk about security we’re not talking about the sensor or the device. This is actually an ecosystem play. And some people say it’s an ecosystem of ecosystem plays, right? So you’re talking about the firmware, the software, the cloud storage, the analytics that goes with it from the data that’s being created. You’re talking about the networking and the communication infrastructure. So that one little device, you can secure it but it won’t mean anything if the entire ecosystem is insecure, and if it’s not part of a secure ecosystem—broader ecosystem.

For example, a European casino was recently hacked through the thermostat in its fish tank, right? So that little thermostat in the fish tank has its own ecosystem that it’s a part of, but it’s a part of thousands of other devices that create the bigger ecosystem. And that’s part of what makes this so complex, is that we’re not just talking about securing a desktop or a server, like we were in the ‘90s. We’re actually talking about securing the ecosystem. And the weakest link is going to be where the vulnerability comes in.

Another part of the complexity is today the economic benefit for security is pretty low, right? The cost isn’t disintermediated. I don’t expect my fridge to scam me. But it could be used to take down Netflix. So, you know, the value of security isn’t well understood by the consumer and by the end users. And, frankly, the manufacturers don’t necessarily have the incentives in place. But the economic benefit of embracing IoT is very well understood. And in fact, McKinsey just put out a report—it happened to arrive in my inbox yesterday—that showed that companies that aggressively pursue IoT applications and pilots tend to drive three times as much value from IoT than companies that don’t. So very little economic incentive to secure, huge economic incentive to embrace. Which kind of brings us to where we are.

TIMBERG: And, Beau, you want to take a minute?

WOODS: Sure. So one of the hats that I wear occasionally, when the government is not shut down, is I’m entrepreneur in residence at the Food and Drug Administration. And in that health care role, I see a lot of people whose lives are absolutely transformed by IoT devices—by infusion pumps, insulin pumps, implanted pacemakers that can connect to the internet and report back to their doctor and let them know when something’s going to be coming up. So there’s a huge amount of both economic and societal benefit to Internet of Things, if we get it right.

But I’m also cognizant that we’re becoming over-dependent on undependable technology. So over the last five years or so, we’ve spent globally about a trillion dollars on people, product, services for cybersecurity. And yet, if you measure failure by breaches of corporations, we’re failing at about 100 percent rate. Now, I don’t think either of those numbers is a good portent for what would happen as we import that technology into systems that we already trust and that we depend on every day.

So what happens if the health care industry has an extra trillion dollars stacked on top of it over five years? That’s pretty hefty. What happens if the failure rate increases of medical devices because we’ve basically put undependable things in them? And that’s what worries me, is that we won’t reach the promise because we get hamstrung by the peril that can come.

We are on the front end of this technology curve. And we haven’t discovered all the future benefits, like you said. We also haven’t discovered all the future downsides. And I worry that we’re in kind of an awkward teenage age with technology, with these technologies in particular, where we are adopting them because we see the promise, we see the benefit, but before we can secure them.

Which means we’re going to have ten, twenty, thirty years, in some cases, for these devices to live when they are both vulnerable and exposed to adversaries at varying capability levels, even down to the level of I can download a tool, click go, and create a botnet of my own that can do things like take down major corporations, kick them offline. Or use the IoT devices that are connected to infiltrate deeper into the organization. And the same types of technologies that are in a home router are also in medical devices. They’re also in cars. They’re also in these other things.

So essentially we’re concentrating risk on a smaller number of undependable components. And doing that by placing them in places where we have to have high reliability. And so I’m also an optimist like everybody else, I think, on the panel.

TIMBERG: No, I’m not an optimist. (Laughter.)

WOODS: Oh, good.

TIMBERG: To become a journalist, we don’t come in the optimistic flavor. (Laughter.)

WOODS: I’m an optimist, but I think I’m only an optimist if we can disseminate the information that we already know about, you know, the few things we know to do right—really right. But more importantly, the many, many things we know to easily fail. And right now, like Rob said, we’re not learning the lessons that we already learned in the ’90s in PCs and in corporate networks.

TIMBERG: So let me start with kind of a definitional question. I mean, Internet of Things. It kind of sounds like it could be everything. But you don’t actually mean my iPhone, which by a dictionary definition is a thing, or my laptop computer, which by dictionary definition is a thing. So what gets counted as an Internet of Thing? Are we talking about my car, my refrigerator, my insulin pump? How do we characterize this?

Rob, why don’t you take a stab at that.

KNAKE: So this is not an IoT device—(laughter)—but it could be. There are now thermoses that—

TIMBERG: It will be. When we do this five years from now that’ll be an IoT device.

KNAKE: No, you can go out and buy this from—I think it is an Indiegogo project, or something like that, that is funded—to essentially take a water bottle for hot and cold beverages and maintain its heat at the appropriate temperature and report that data back to you, to your iPhone. So that’s—the whole concept is the idea of taking really, really cheap sensors and really, really cheap mobile chips, and using them to put sensors in places we never would have put them before—which has massive benefits if they are inside your heart, or in your artificial limbs. Maybe less so if they are your light bulbs, though I understand Niloo really likes her IoT light bulbs.

But that’s what we’re talking about with the Internet of Things. It’s the spreading of sensors and communications technology to places we never would have put it before.

TIMBERG: But why not my phone, right? Thirty, forty years ago I wouldn’t have thought of my phone as having a sensor, so why is the dividing line there? Is it because I have a more direct relationship with it?

WOODS: Yeah. So there’s many ways you can break this up, what’s the distinction between those. But I see at least three or four fundamental differences between Internet of Things and traditional computing and networking. That is, consequences are different. So the consequences, if your—if your laptop crashes are very different than if your car crashes because of a security issue, right? The timelines are very different. So on one hand, medical devices will be in hospitals for decades. The average lifespan of a car, I think, is something like eleven years on the road. So they’re much, much longer lived.

On the other hand, the time scales with which the consequences manifest are much, much shorter. They’re more real time. You also have different capabilities that you can—you can bring to bear. So while your laptop today doesn’t control the physical world, IoT does. There’s some control element of that. And there’s also different contexts. So when you’re in a corporate environment you’ve got an IT team that can help with all of the security issues that you have. When you’re driving your car down the road or when you’re flying a plane through the air, where is the IT team? Where is the support staff? It’s basically you, and you’re kind of busy doing—

TIMBERG: I’m hoping that’s true with an airplane. I’m hoping I’m not in charge of that.

WOODS: Well, hopefully you’re not in charge of that. But for the pilots and for the doctors in hospitals, and for the other people who are specialists at operating this equipment, they have less computer security training than, obviously, the average IT staff.

TIMBERG: I see. I’m going to break down everyone’s optimism. So how many of these things are part of the Internet of Things? I hear there’s more of them than there are of us. Niloo, you want to take a stab?

HOWE: So there’s about seven-point-six billion humans in the world. We—you know, as of last year there were close to nine billion, you know, Internet of Things. Projected to be—

TIMBERG: And how many of them are hackable?

HOWE: —twenty billion. Hundred percent probably are—

TIMBERG: OK. (Laughs.)

HOWE: I mean, look, at the end of the day if someone wants to hack something, and they’re completely focused on it, they can probably figure out how to get in. But how many of them have any kind of security, any kind of reasonable security—as the California legislation is requiring? Something like six out of seven home routers don’t—have outdated firmware. So a large portion of these are not secure—not even not secure by design, but they have known vulnerabilities. I think the projection is that by 2025 the average person’s going to have about 4,000 interactions a day with the Internet of Things.

And, you know, the good news is we have a little bit of time here. So when 5G arrives as a communications infrastructure, that’s when we have to be really worried, because 5G is going to enable the massive sort of propagation of the Internet of Things because of the particular—because what it enables is faster. It has very low latency, which is what you need for autonomous cars, for example. And it is designed to allow you to attach a lot of things to it, which 4G is not. So it’s not around the corner, but we have to have this date in mind, because when 5G gets here, this thing’s going to really explode.

TIMBERG: Right. And so—to go back to the example of my iPhone, which we agree is not really a part of the Internet of Things, but it gets updated every few weeks by Apple. Is that happening with the gazillion Internet of Things things out there?

WOODS: No.

TIMBERG: No. (Laughter.) Are any of them getting updated? And why is that a problem, if they’re not being updated?

WOODS: So there’s kind of a—many, many links in the chain of why things aren’t being updated. And if any one of those breaks, then you don’t have an end product that’s updated. So you have source component software, little packages in libraries that get pulled into the building of the thing to begin with. You have the manufacturer—the kind of final goods manufacturer who then would have to update that source component from their repository. Then you push it out to a consumer or, in some cases, a middleman that would then have to adopt the update. So if you think about, like, the Android ecosystem. It doesn’t matter what Google publishes. It’s up to Kyocera to update your handset. Or Kyocera and T-Mobile. In some cases, there’s a longer chain. And then it’s often up to the individual consumer to hit the button that says “update.” And even in highly managed environments, like hospitals, somewhere along that chain breaks down. So if you look at home routers, six out of seven aren’t updated. That’s really good compared to hospitals and medical devices.

TIMBERG: OK. So there’s more of these things than there are of us. They are not well secured and they’re not getting updated with security patches. OK. Now what kinds of actual things have happened in the Internet of Things world? Rob, I know you’ve written a lot about botnets. Do you want to take a stab at this one?

KNAKE: Sure. So botnets, right, are networks of computers that have been turned into bots, controlled by a bot master, for malicious purposes. Every kind of malicious purpose you can think of on the internet. Everything for propagating spam to carrying out ransomware attacks, to carrying out distributed denial of service attacks, flooding attacks. Ten years ago usually these were compromised home computers. You didn’t update your Windows XP machine, it gets infected. It becomes part of a botnet. And it starts pushing out traffic to, say, take down the website of JPMorgan.

Fast forward to today, most of the botnets we’re seeing aren’t affecting home computers. They are affecting IoT devices, which are undefended, often directly accessible over the internet, and can be taken over very easily and formed into these very large botnets. The biggest case recently were the 2016 Mirai attacks, which ended up—the Mirai malware was written by just a bunch of—they were Rutgers University students. This was not a science experiment. This was a criminal enterprise. But they were at Rutgers.

HOWE: And it was Minecraft, right?

KNAKE: And it was—right. What they were doing was they were actually forming a botnet, so they could take out servers for the computer game Minecraft. This is a very competitive world, gaming. And so this actually happens all the time. Most DDoS attacks in the United States, I believe, are actually targeted at gaming servers. So this is the sort of bad effect that I focused on. The things that can happen to your privacy from a botnet are bad to you, from a compromised IoT device, are bad to you. The things that your compromised IoT device can do to the rest of the internet, to major corporations, to everybody else, are where I think we have a real public policy failure. We don’t know how to handle the problem that there are bad people doing things to nice computing devices to cause a problem for a third party. And I think that’s the biggest public policy challenge in this area.

TIMBERG: And Niloo, are there any national security implications of this at this point? Or is this all in the future and theoretical?

HOWE: No, of course there’s huge national security implications. I mean, if you think about health care, if you think about the grid, if you think about, again, all these—whether it’s DDoSing critical infrastructures, as has happened to financial services institutions, whether it’s taking down the grid—there are real national security implications. And there has to be a sort of national conversation from a strategy perspective. But we also can’t really wait for that, because I do think that, again, some of this is Groundhog Day, right? Like, you are reliving 1995. No default username and password. At least a smart approach to default username and password. I mean, we talked about this in the 1990s.

So there’s a lot of lessons that we learned twenty years ago that we really should be able to apply to this situation. I mean, one of the most important to me is we cannot put humans at the center of security posture. If we’re relying on people to actually change the passwords, to download, configure, patch, all that stuff, we know we’re terrible at this, right? Our operating system is pretty flawed in terms of dealing with this age of rapid innovation. And so we’ve got to take the human out of the mix. And we have to automate as much of this as possible. That’s the end state we need to go to, especially in a world where these things outnumber the people by an order of magnitude, which is where we’re headed to.

So the lessons are very clearly there. We need to develop a policy. We need to develop a strategy. And from a crawl, walk, run approach, we’re not even crawling today. So there’s some low-hanging fruit that we can—we can implement pretty fast.

TIMBERG: So we’ve talked a little bit about the things that have happened so far—the DDoS attacks and such. Beau, if we look out five, ten years—you deal with transportation and energy grids—what could go wrong in five or ten years?

WOODS: What could possibly go wrong?

TIMBERG: Just give us an example.

WOODS: You know, with the Internet of Things—Dan Geer likes to say, on the internet every psychopath is your next-door neighbor. Now we’re connecting that psychopath to things that we depend on critically—like energy sector, like transportation, like health care. What could possibly go wrong with that? I would like to say that I’m optimistic that we’ll have it all fixed in the next five or ten years at a policy level. But the reality is, even if you fix it at the policy level from some magic wand, which doesn’t exist, right—we don’t have the fixes in mind yet. We’ll have to build them. But the research and development time, the lead time and the legacy systems are going to carry us through the next few decades. So I think five to ten years from now is going to look like today, only more.

HOWE: And can I just add one thing, from a national security perspective? So when it comes to national strategy, it’s not just about security. It’s also about R&D, as you said. And who’s going to own the innovation when it comes to all sorts of technologies that we care a lot about—whether it’s quantum computing, artificial intelligence, or frankly even the Internet of Things? So when you look at drone technology, for example, China has the lead on drone technology because they are funding the companies that are developing this technology. And the U.S. companies cannot compete from a price performance perspective, because they have to raise venture money—or money from somewhere—in order to innovate.

So we just have to be very thoughtful as we look at this. The innovation is going to happen. It’s going to transform the way we work, live, and play. And as these billions of devices are going out there, who do we want to be owning the front end of that innovation curve? And that’s something we should also be thinking about.

TIMBERG: So that brings me to another question. If we agree there’s a problem here that’s likely to get worse, for all the reasons we’ve been discussing, who should be taking this on? The hardware makers? The software makers? The U.S. government? The United Nations? The hackers of the world? Rob, you were in the White House, tell us, what’s the solution here?

KNAKE: So I think the real answer is to figure out how we assign liability, right? The typical answer is we want to assign liability to the criminals behind this act, right? And so in the case of the Mirai botnet, they were dumb kids in New Jersey. We were able to do the forensics on it, figure out who they were, attribute it to them, and arrest them, and they’re going to go to jail. That’s usually not what happens in the cyber underworld, because we don’t have the ability to reach cyber criminals in Ukraine, Russia, China, the list goes on and on. Even if we can do the attribution and figure it out, getting the individual back to the United States and putting them in jail is something that we don’t have the greatest record at.

That’s not to say it’s not possible. That’s not to say that it doesn’t happen. I see a whole bunch of ex-DOJ colleagues of mine in the back of the room. You guys do a great job. But that’s not ultimately the solution. And so we’ve got to figure out, how do we place liability somewhere else in the chain? I would say on an international perspective, we need to take an approach where we’re willing to assign liability, and accept liability, as a nation when U.S. computers are used to attack France. We should have some liability and some responsibility for figuring that problem out.

TIMBERG: So, but as a country, not as the companies that make those computers, or the software makers who make the software that runs those computers, or all of the above?

KNAKE: I would say you need to go down that line. I think one of the laws that we need to fix is we need to have a way that we, as consumers, can hold device-makers responsible. I also think we need to have a legal construct in which we can hold the device-makers, the ISPs, and possibly the companies that are operating insecure devices liable, if those insecure devices are harming a third party, right? So in the case of the botnet attacks against the financial services community in 2011, ’12, and ’13, it was servers operated by companies like GoDaddy out of Texas that were compromised and were DDoSing the banks. Under our current construct, we would never hold GoDaddy or their users who had insecure WordPress installations liable. I think we probably need to extend liability to those people in order to solve the problem. It doesn’t seem fair to many people. And I agree maybe it’s not fair. But I think it would be effective.

TIMBERG: Niloo—oh, sorry—Beau, go ahead.

WOODS: Yeah, I think—so there’s two industries that call their users “users”: software and illegal drugs. (Laughter.) Those two industries are also exempt from liability in the U.S. So, you know, you can make a connection if you want to. There are better ways and worse ways to impose liability on device makers and on operators. And I think that what we need to have—what we need to start with is to have an open and honest dialogue on where liability should be placed in the supply chain and chain of operations.

HOWE: So I think, before we start imposing regulation on a nascent industry where technology is going to move much faster than policymakers can possibly keep up with, we should think about the tools that are available and at our disposal, right? So when you look at botnets, for example, the number-one country where botnets come out of is Brazil. And it’s because it’s a safe haven for cyber criminal activity. We have some pretty good incentives we could probably use to get Brazil to start taking this stuff a little bit more seriously. We can use a carrot and stick approach. The victim, by the way, is not—number one—is not the U.S. It’s a shared problem globally across EU, U.K., Asia, and the U.S. So we can come together and start using some pretty big sticks to discourage criminal activity.

The second thing I would say is when we decide to do it, we actually do get it done. So we took down Silk Road. And since then, we’ve been able to take down almost every massive underground cybercriminal hub, because the world is aligned to do that, right? We take them down. Now, typically the userbase is in the hundreds of thousands, which is kind of crazy when you think about it, right? The mob, when you had a RICO action, it’d be, what, like a handful of people, a dozen people? We’re talking about hundreds of thousands of users that are coming together. But they sell things that we know are bad. They sell drugs. They sell weapons. They sell child pornography. And we’re going to take them down every single time.

So I do think that if we were aligned, and there was a global incentive to do it, we have shown that we can actually take down these criminal networks, and we can solve the attribution problem. And I think it goes beyond liability in the private sector. I think it’s—clearly, the private sector has to own their piece of this. Consumers probably need to at some point wake up to their piece of it. But I do think the government has to take responsibility as well. And it needs to own its piece of it, not just for the IoT ecosystem, but cybersecurity in general. And when the government steps up and does it, I think it’s the single-most powerful tool that we have. And without the government getting involved, I think it’s very hard to solve this problem.

TIMBERG: So before we came on the stage, Rob was telling me about another approach. Was it IoT chemotherapy, is that right? And that I believe he was advocating, right? I mean, hackers of the world take it into their own hands, am I right? Sort of vigilantes?

KNAKE: So, to be clear, I don’t think I was advocating anything. I think this is an interesting idea. (Laughter.) It was probably three or four years ago that the security community started to notice that somebody was going around and bricking IoT devices, purposely breaking them so they could not be infected and taken over by bot masters. And about a year after that, allegedly the person who was behind it said, OK, I’m going to stop doing this. It’s probably too dangerous. I’m not sure it’s a good approach. But this is something that governments might want to think about doing. Not as an individual vigilante.

It’s an interesting concept. If you have a device that is either already a part of a botnet or is susceptible to being part of a botnet because it has a known vulnerability that can be exploited over the internet, it may be worth thinking about whether it makes sense to take those devices offline.

TIMBERG: Isn’t there a Fourth Amendment issue with that, for if the government is going into people’s devices and extracting information, and acting on them without legal process?

KNAKE: You’re the only lawyer we have on the table.

HOWE: I was an entertainment lawyer like twenty years ago, so. (Laughter.)

TIMBERG: It seems like that might be an issue.

WOODS: With that approach, you don’t have to take information off. You can just disable the device completely. That’s it. It’s a different approach.

TIMBERG: I think when you talk about hackers doing it, I was thinking OK. If the government does it—that’s—I would think you would need legal process of some sort.

KNAKE: Well, if a hacker does it, it’s definitely a crime.

TIMBERG: No, I get that, but—

KNAKE: So I think it’s an interesting question about how do we manage these devices that nobody else seems to manage? And could you come up with a construct in which you say: This device has been effectively abandoned?

TIMBERG: And to be clear, you’re not actually advocating this. I was teasing. You’re just raising this as an idea.

KNAKE: Raising this as an idea.

TIMBERG: But I was curious what you—

HOWE: This issue goes beyond IoT, though, right? Like, when Microsoft stops supporting some, you know, OS, and people keep using it, we end up with situations like we did with WannaCry and NotPetya. So the issue of outdated firmware, outdated software, unpatched systems is much broader than IoT. And it’s a heretical approach. (Laughter.)

TIMBERG: Well, on that note, so it’s 1:00. We’re going to open this up to our members here. You can join the conversation. I want to remind everybody, this is all on the record. It’s being livestreamed over the internet. I believe there’s some journalists here. So I’m going to call on people. You’re going to wait for the microphone, please. And then please stand and state your name, and your affiliation, and ask only one question. Keep it concise, so we can allow as many people to participate as possible. Why don’t we start with the lady right here? If we can get a microphone to her, that would be great.

Q: I’m Mitzi Wertheim with the Naval Postgraduate School.

I don’t have any computer skills, but by God do I understand how complicated it is, because I worked with Art Cebrowski when he was getting the whole thing going in the Defense Department. My question is for our journalist. Hearing what we heard today, how can you create a visual story so the rest of the company—country can understand how incredibly complex this is?

TIMBERG: I’ll take a stab at that. My answer is going to be something like I don’t know, but I did a five-part series in 2015 about the internet and why it’s—was it—yeah, 2015—about why it’s so insecure, going back to the early days at the Defense Department, the way it was originally engineered, and took it up through, you know, various phases, including the car hacking of today, but also looked at the Internet of Things and how insecure it was. And one of the guys I interviewed for that was Linus Torvalds, who created the Linux operating system, which is actually in a lot of these IoT devices. So we take a stab at it.

I will tell you that the journalistic kind of business environment has become a lot less kind to sort of big and weighty things that aren’t directly connected to kind of news of the moment. We write endlessly about what’s going on with the White House. It is really, really hard to do big and complicated these days. We do it. The Post does a lot of it. But, you know, it used to be that, you know, we decided what was important, and we put it on the front page, and we threw it out in front of people’s houses every day. And it’s just—the world doesn’t work like that anymore. So it is a lot harder than it used to be, I think, to get—to take on something that complex and do it well. And to do it visually is—the tools are better than they’ve ever been, but, man, it is really difficult.

Q: Maybe the Atlantic should do it.

TIMBERG: The Atlantic is great. I’m a huge fan. Why don’t we—no, I’m pointing right at you. Yeah.

Q: Thanks. Mariam Baksh, Inside Cybersecurity.

So I was hoping, whoever wants to take it, I know that Niloofar mentioned a California bill. I wanted to get you guys to say how you see that potentially driving national action on IoT security in the same way that CCPA, the California privacy bill, has done it for national legislation around privacy. And this is my way of sneaking in a part B, whether the procurement bill from Senators Warner and Gardner might be a good starting place for national legislation.

WOODS: I’ll take that one. People are looking at me, so. (Laughter.) I’ve actually looked at both of the pieces of legislation you’re talking about. Representative Kelly on the House side also introduced one in December that was essentially an updated version of the Warner-Gardner bill. So part of—I’ll start with the California bill. The key clauses there, as you alluded to, are necessary—or, appropriate and reasonable controls. And essentially in the prelude to the bill, what they said is we already have laws that address these things for everyone else in the supply chain and the chain of delivery, except the manufacturers. So we’re rectifying that loophole in the law.

There were a couple of provisions in there around passwords that were specific. They were meant as examples rather than either a safe harbor or a requirement. Because, again, going back to it, it’s appropriate and reasonable. And that’s the standard that they intend to apply. With the Gardner-Warner bill, the Kelly bill, and I think Lieu was the co-sponsor of that one, it’s how can government use its power of the purse, its procurement capability, in order to drive and shape better options for the market, for them first and foremost, but for the rest of the nation, secondly? And if you look at the Kelly bill which is, again, updated, the provisions in there shouldn’t be very controversial.

Do you take help from people when they find something and report it to you in good faith, right? Coordinated vulnerability disclosure program. Do you avoid conditions that will allow mass propagation of attacks, you know, by having reasonably sound authentication password policies? And do you allow these systems to be updated—I believe are the three provisions that remain in that one. So it should be very uncontroversial. A lot of the organizations that would throw a fit have already had a chance to weigh in, and this is the result of that. So I’m fairly optimistic that that might go into practice, where it’s not already today. Because a lot of organizations already do that. If you look at the Mayo Clinic, for instance, in the private sector, they’re doing a ton around procurement. And that’s becoming a de facto standard for the entire health care ecosystem. So there’s some great capabilities that lie with that power of the purse, whether it comes from government or private sector.

KNAKE: Can I just jump in?

TIMBERG: Yeah, please.

KNAKE: So the California bill is great in the sense that California is taking the lead, when the federal government has not. The danger is, the text of the bill is about this big. It really does not define what these terms mean, what is reasonable security. And so it’s going to be left to the courts to figure this out. I don’t know about you. I’m not sure that a California state judge getting this issue is going to be able to make that determination. So I really would like the state of California to think about how they could both set a floor and a ceiling, how they could say you need to do these things. If you do these things, and you do them well, and your device is still compromised, you will not be held liable. That there should be some kind of safe harbor provision. And I think if we construct laws like that, to address Niloo’s concern, you can then avoid the threat that this kind of regulation will stifle innovation. I actually think it could help innovation if people knew that these kinds of devices have met basic standards, and defined standards, and testable standards.

HOWE: So if I could just add to that. Yes, and the positive side of that language is because the technology is evolving so fast, because we can’t predict where it’s headed and what the issues are going to be, I maybe have more faith in the judicial system than I do in the policymaking and legislative process to be able to sort through the issues real time. And the problem is just the timeline sometimes for developing policy and regulations is so much slower than the timeline for technology and the social norms associated with it that it’s just really hard to keep up with. So by having broad language, you can ensure that you’re not setting standards that will be irrelevant five years from now.

And I do think getting the standard-setting bodies involved with setting the standards is a really important part of this, right? Having IEEE UL-like standards that are set for certain types of devices, especially—and it has to be targeted by industry, because the risk is very different in health care than it is, for example, in agriculture, than it is in utilities, than it is in consumer. So there’s a whole lot of work that has to go into this. I’m personally OK with the breadth of the language, because I think it allows it to evolve as the ecosystem evolves and as the threats evolve.

TIMBERG: Right there. I’ll remind everyone to stand, and your name and affiliation—

Q: Hey. Jeremy Young with Al Jazeera’s Investigative Unit.

I’m wondering if we know how the Internet of Things is being exploited for espionage purposes, and are there any examples or stories that you’re familiar with? And is it safe to assume that the U.S. is in a position of leadership on that issue, or not?

TIMBERG: Who wants to take that one? (Laughter.)

WOODS: I’ll jump on the grenade. (Laughter.) So I don’t remember who it was earlier that referenced the casino that was hacked into by the thermostat. And as I understand it, that was for criminal espionage purposes. They wanted to get information on the organization and some of the high rollers behind it. I know I’ve read in the past where some of the U.S. intelligence agencies have said they love the Internet of Things because it’s going to be great to spy on people through their toothbrush. Now, to the degree—the degree to which they’re actually doing that today, I don’t know.

But what worries me is that if we know that that capability exists, how long will it be—and we may have already seen it. I think I saw something about this—but before those technologies and capabilities are banned from any military or national security apparatus, and their families’ homes, and what message does that send to the rest of us to say how trustworthy these things are, if our government won’t trust them, or corporations won’t trust them in people’s homes?

HOWE: Well, I mean, there’s already examples out there in the media about, you know, your fitness devices, right, geolocating you, and getting hacked for that purpose. So just like everything else, I mean, your cellphone has a—has a camera, and it has a microphone that can be turned on remotely, even when the phone is off. So I don’t think the threat is that different than the threat that exists today, with all the devices that are out there. It’s just that there are so many of them and they’re all over the place. And that’s the piece of—and they’re generating so much data. And where that data goes, and where it’s stored, and the analytics you apply against it is just as much of an issue.

And we haven’t even touched on GDPR, and the GDPR implications of—with respect to all the data that’s being generated from these devices. But I can’t imagine that it is not a great playground for all sorts of nation-state actors.

Q: What is GDPR?

WOODS: General Data Protection Regulation.

TIMBERG: It’s the European data privacy—

Q: Thank you.

HOWE: Sorry. Apologies.

TIMBERG: It’s a law that took effect last year. It has had the effect of extending all sorts of privacy protection to everybody in the world, because all of these companies want to be able to operate in Europe. And so it’s forced every company you can think of to be more careful about what it collects, and how long it keeps stuff, and why. I think is that—did I get that close enough?

HOWE: And the right to be forgotten.

TIMBERG: Yeah. Oh, it also includes the right to be forgotten, which is another controversial measure.

Do we have another question from the crowd here? Right here.

Q: I’m Audrey Kurth Cronin, director of the Center for Security and New Technology at American University.

Actually, my question relates very much to what we’re talking about right now. I was wondering if you were going to talk about the fact that the reason—one of the reasons why three times as much value derives from the Internet of Things for companies is that they’re also collecting a tremendous amount of incredibly valuable data. So how do we get ahead of not just the financial disincentive to incorporate security into devices, but also the financial incentive to collect data that the provenance of which we’re still a little bit wondering about in the United States?

TIMBERG: Rob, do you want to take a stab at that one?

KNAKE: I have an odd perspective on this. I think it was the CEO of Ford got really excited about the idea that they were going to collect all this data as they put IoT into their cars, and that they could monetize this data. And then somebody pointed out that, you know, a low-end Ford Focus sells for, like, $20,000 a year at a pretty healthy profit margin. And that the data-sellers on the internet operate at a much lower level. So I think we may have overstated the value of collecting all this data. Moreover, as we collect more and more data, I think the value of it’s going to go down, because there’s going to be just so much of it.

Now, from a policy perspective, I think what we need are some rules for consumers about disclosure that are much stronger than they are today. I think we have a problem with EULAs. If I could create a rule, it would be pretty simple. Before you click on an End User Agreement, you should have to sit there for the time it would take an average person to actually read through that agreement. And if we did that, we would probably start to recognize that we were signing our lives away every time we add a new service or plug in a new device.

WOODS: So I’ve also got a slightly different take on this. If you—if you remember the Equifax breach, the first communication that Equifax sent is: Don’t worry, nothing important got lost. And they were right, because that was a communication to their shareholders, and they don’t care about customer data as a business asset. They only care about the analytics that derive from that information. Now, the fact that all of our personal information is out there already, from one of the various, you know, hundred breaches that we hear about every year should say, A, we’re really bad about protecting information. B, how much is there left to lose at this point, right?

So is there value still in collecting all the information? And if so, then why are we spending so much political and economic capital on sequestering it as if it’s, you know, the new oil, as we’ve heard? And how—why are we spending so much on information we know has already leaked?

TIMBERG: Questions from members? Back there.

Q: Hello. I’m Jonathan Lee with McKinsey and Company.

This question maybe is best posed to Rob, but I welcome anybody’s responses. Could you talk a bit about what the U.S. government is currently doing to address some of these issues with the Congress, the executive branch? And what are the sorts of things that perhaps the government is not doing that you would like to see be taken?

KNAKE: So NTIA at the Commerce Department put out, I think, what is the best report on botnets that I’ve seen anywhere, and to include the one that they published last fall. I think it really was excellent, excellent work by a community. And I think that that has set a basis for bringing together the community of technologists, policymakers, and regulators to start addressing this issue. So I think that was a really positive first step. And I think it came out of maybe the first or the second executive order that President Trump signed after taking office. So that was a first good step. Let’s study it.

The next step, I think, is really to figure out how we start implementing some of those recommendations, and how we start building out the apparatuses that we need, particularly internationally, to handle these kinds of crises. Every time that a botnet forms and the U.S. government or a tech company thinks about taking it down, organizing a takedown where they will go out on a multifaceted approach, try and disable it, arrest the end users. Every time this happens, it is an ad hoc global effort. So we need the kind of apparatuses if we’re going to just simply try and manage this issue that we don’t have today that would be international, and national, and within the technology community. And that needs to be a standing process. That would be my biggest focus, that I think the government should tackle.

WOODS: Yeah. I’ll add, you know, Allan Friedman is in the room, but he can’t talk about his work because he’s furloughed. He’s with the NTIA. And there’s a number of multi-stakeholder groups that have been pulled together to look at some of the really thorny issues with IoT. And so I think that’s a great venue, because it’s essentially industry and others talking with each other to come up with consensus standards or guidance that then is not a government regulation but serves as a—maybe a lighthouse for others who are looking for that type of information.

Secondly, I think we’ve seen, you know, certainly with the FDA—I’m a little bit biased—but the FDA has done a great job of—as an agency, at the agency level—getting ahead of some of the issues. Took them a while to ramp up, but now that they are over the last five years they’ve really transformed the medical device ecosystem to where you have manufacturers proactively being much, much better at cybersecurity than others.

And then third, we’ve seen a lot of proposed legislation, both in the U.S. at the national and state level as well as abroad, and some adoption. And I think that that will only continue until some of these IoT bills pass. I don’t know which one it’s going to be. I’ve got—there’s a lot out there. So I think one of those will come into play. The question is—and I think you would agree with this—is that going to be one of the good ones that doesn’t artificially restrain IoT and it does the right thing, you know, is actually going to be effective? Or is it one that just has good political support because it comes at an opportune moment, after a big breach or something, and it’s not the regulation or the law that we need?

HOWE: So just two thoughts. One, we need to make sure that however we regulate it doesn’t crush the startup ecosystem that needs to develop around this and give an unfair advantage to very large platform players in this business, because we want to make sure that innovation happens.

The second thing I would say is from a government perspective there’s one thing only the government can do, and that’s deterrence, right? And the government’s got to step up its game when it comes to deterrence because we can’t do that in the private sector.

TIMBERG: What does that include? Does that include hacking back?

HOWE: So it’s probably not hacking back. The term of art would probably be a little bit different than that. But I—but I do—you know, look, there’s tons of tools of state power that can be used. You can use sanctions, for example. And we talked about countries that safe harbor cyber criminal activity; there’s lots of sanctions you can use. You can escalate to—from economic to military repercussions. But it doesn’t have to be—it doesn’t have to be hacking back. I think there’s—there is carrot-and-stick approach that we can use.

But at the end of the day, private industry does not own deterrence. It cannot stop criminal activity. It cannot stop state-sponsored activity. In fact, when the DDoS attacks happened on the financial service sector in 2011—

KNAKE: ’11, ’12, ’13—

HOWE: —the White House came out and said, sorry, we’re not going to help you with this, right, which to me is insane because it was a state-sponsored attack, and how can industry be left to its own devices? I mean, it can deal with the particular issue at hand, but it can’t deal with the larger issue. So I do think that the things that only government can do—industry can self-regulate. You can come up with standards. You can come up with agreements. But we cannot do deterrence.

TIMBERG: Questions from members?

Q: Ooh, sorry.

TIMBERG: Dropping the mic even before you start. (Laughter.)

Q: OK. Well, Ariola Vee (ph) from the Defense Department.

I wanted to (go over ?) a little bit on some of the questions. We talk a lot about how we regulate the industry, but—(comes on mic)—how do we also educate consumers, especially young people who are growing up? So right now schools are talking about how you educate them to be smart online consumers—to see fake ads, to understand bullying. Also, those in the room who may be new to some of these topics, what are, one, ways we can educate the consumer population to also be a force for change? And, two, if you were a smart consumer, where would you go for some of this information to keep up with all these changes?

KNAKE: So I’ll jump on that one. I mean, we’ve solved that problem. We have Cybersecurity Awareness Month every October. (Laughter.) I mean, I say that glibly, but I—no, I mean it.

I don’t think awareness and education is our way out of this problem. I don’t think that we should expect every consumer to become an IT manager of their home, of their car, of their lightbulbs. I think we’ve got to create economic models where somebody else does that.

So maybe fifteen years ago Neal Pollard, when he was a fellow here, wrote a paper that I think was titled, you know, what are you going to do when your toaster attacks you. And it sort of envisioned the problem that we’re seeing today before we’d even coined the term Internet of Things.

One of the answers to the what do you do when your toaster attacks you may be toast as a service. It may be the idea that if you’re going to have an IoT toaster—(laughter)—

TIMBERG: On drones.

WOODS: On drones with blockchain. (Laughter.)

KNAKE: —you pay, you know, a few dollars a month for that toaster and somebody manages it. And of course, you know, I think companies really like this, right? Microsoft actually really likes software as a service rather than selling you a new box with software inside it every couple of years. I don’t think we’re going to get there for toasters until we get to a point at which we are assigning the liability for that toaster doing something bad either to the company or to the individual owner of the toaster. But I think the real answer has got to be make it somebody else’s problem.

TIMBERG: Does anyone else want to speak up for consumer education as a—as a value in this?

WOODS: So I will, partially. So I like the idea of consumer education and awareness. I think it has to be—I think, you know, training kids is great, but if you want to make a change having some awareness campaign at the retail level, when people go to buy things, is probably a better way to influence that. It’s a better nudge. So working with some of the retailers and some of the manufacturers, putting, you know, cards up at the point of sale, is a good option. I’ve been talking with some of the retailers about that. And they like it because then it decreases the number of returns that they get, it decreases the number of questions they get on the floor. If you try to find somebody at one of the big-box retailers to answer questions about security of IoT devices, you’ll be lost, right? So that’s one way.

But I’d also say, you know, to flip it around a little bit, we’re talking about giving more education and awareness to kids in elementary school than is required in computer science curriculum. And that is terrifying to me because when the people who are creating these things don’t know how to build them securely, haven’t been trained and reinforced on that and incentivized to do so in industry, that’s a huge problem.

And also, most of the people making some of the really low-level Kickstarter-, Indiegogo-sized things don’t have a formal computer science background. So how do we reach those folks so that it dampens the need for the consumer awareness and education training, right? We’ve spent sixty, seventy years in computer security, in computer science, basically—I’m a former IT guy so I can say this—blame the user as the problem. Stupid user, right? User error. “Problem exists between keyboard and chair” is a classic. (Laughter.)

I think we need to flip that paradigm. I think we need to be better at building and engineering these systems so that they are more like a car, where you just get in, turn it on, and use it. In a car you need a driver’s license because it’s a public safety hazard to be out on the road. So maybe in some of the public safety hazard situations there’s licensing. That’s a five-, ten-year-off conversation, I think. But making us adapt to machines kind of seems like the dystopian future we don’t want.

TIMBERG: We have time for one or two more questions, or—anyone who has not asked a question so far who wants to jump in before I go back? I guess I’m going to go back to you.

Q: Yay. Thank you.

Just since you talked about the takedown of botnets, maybe you can talk about concerns in the bills coming out of folks like Graham and Blumenthal that would allow the Justice Department to take down botnets. There are some concerns from privacy folks that that would impinge on, you know, free speech, or—I guess free speech folks. Do you support those bills? I can’t remember the exact name of it, but there is—it’s supported by Graham, Blumenthal, people like Whitehouse on the Democratic side, that would allow the Justice Department to take down botnets. Maybe you can speak about the concept, if not the particular bills.

KNAKE: So, I mean, when I talk to people in the Department of Justice about them, I think these are actually fairly narrowly constructed bills that would solve actual problems in these takedown processes. So the way to understand this is there’s basically a gap in the law. If you are a botnet, and all you are doing is taking over somebody’s computer and pumping bad traffic out to take down somebody else’s website, that may not actually be a crime. It’s only a crime if that botnet is violating the Computer Fraud and Abuse Act’s provisions about listening and collecting data, sort of wiretapping. And so my understanding is that these bills are trying to fix that so that the Department of Justice can say, no, you know, the crime here is this thing; it’s simply that the computer has been taken over illegally.

I think that’s a fairly narrow solution. I don’t see a privacy concern with it. If there are other concerns—(audio break)—

WOODS: (In progress following audio break)—and spam sending takedowns happen today anyway. It just takes a while. It can take, you know, months to years to get those done. I’m not familiar with the specific pieces of legislation, but if they can accelerate the takedown without unintended side effects it seems like a good idea.

TIMBERG: And I take it the risk, though, is that you’re empowering the U.S. government in a way that does not necessarily have legal review to be turning people’s computers off.

KNAKE: I don’t think—I don’t think that’s the case. I think when we’ve seen these takedowns done effectively they are many months in the planning. There is judicial review. There are warrants. These are legal processes. And I think in many ways the frustration I’ve had looking at takedowns is it is such a cumbersome process; that we go through these waves of saying, OK, we’re going to try and do a takedown a month.

When I was in government I remember I think it was Joe Demmer (sp) at the FBI came and said we’re going to do a takedown every single month. We have a whole campaign. We have a whole plan. And then I think in a two-year period they managed to do two takedowns. That wasn’t because they weren’t resourced. It wasn’t because they weren’t trying. It wasn’t because they weren’t technical. They were all those things. But the coordination and the legal machinations needed to do that turned out to be a lot harder than they thought it would be.

TIMBERG: And is that because it’s difficult to identify who would appear in court to defend their computers or potentially their botnets against a government action?

KNAKE: So, I mean, my view is that the takedown challenge really is a problem of coordinating all those aspects at once if you’re going to do it effectively. Microsoft has done a lot on their own, and I think they’ve been less effective than the ones that will involve, say, something like the National Cyber-Forensics (and) Training Alliance out of Pittsburgh serving as this third-party coordinator of the takedown, and then bringing in the Secret Service, the FBI, Microsoft, the ISPs, independent botnet researchers, Interpol, you know, the French police, you name it, and coordinating that kind of activity. We don’t have anybody who owns that coordinating function on an ongoing basis.

TIMBERG: Well, on that note, we’re done. It’s 1:30. Thank you all for being here. If you’d like to thank our panelists for being with us today. (Applause.) Thanks very much to all three of you.

HOWE: Thank you.

TIMBERG: And appreciate you all coming out to join us today.

WOODS: Thanks.

HOWE: Thanks.

KNAKE: Thanks for doing that.

(END)